The shape of things to come: an essay on probability in project estimation

Introduction

Project estimates are generally based on assumptions about future events and their outcomes. As the future is uncertain, the concept of probability is sometimes invoked in the estimation process. Much has been written about how probabilities can be used in developing estimates; indeed there are a good number of articles on this blog – see this post or this one, for example. However, most of these writings focus on the practical applications of probability rather than on the concept itself – what it means and how it should be interpreted. In this article I address the latter point in a way that will (hopefully!) be of interest to those working in project management and related areas.

Uncertainty is a shape, not a number

Since the future can unfold in a number of different ways one can describe it only in terms of a range of possible outcomes. A good way to explore the implications of this statement is through a simple estimation-related example:

Assume you’ve been asked to do a particular task relating to your area of expertise. From experience you know that this task usually takes 4 days to complete. If things go right, however, it could take as little as 2 days. On the other hand, if things go wrong it could take as long as 8 days. Therefore, your range of possible finish times (outcomes) is anywhere between 2 and 8 days.

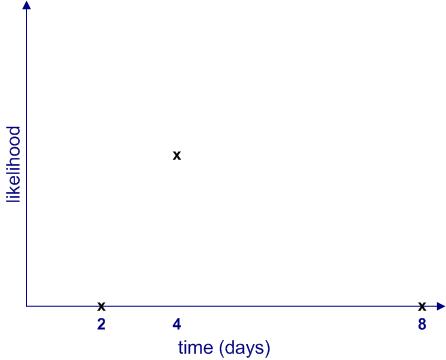

Clearly, these outcomes are not all equally likely. The most likely outcome is that you will finish the task in 4 days. Moreover, the likelihood of finishing in less than 2 days or more than 8 days is zero. If we plot the likelihood of completion against completion time, it would look something like Figure 1.

Figure 1 raises a couple of questions:

- What are the relative likelihoods of completion for all intermediate times – i.e. those between 2 and 4 days and between 4 and 8 days?

- How can one quantify the likelihood of intermediate times? In other words, how can one get a numerical value of the likelihood for all times between 2 and 8 days? Note that we know from the earlier discussion that this must be zero for any time less than 2 or greater than 8 days.

The two questions are actually related: as we shall soon see, once we know the relative likelihood of completion at all times (compared to the maximum), we can work out its numerical value.

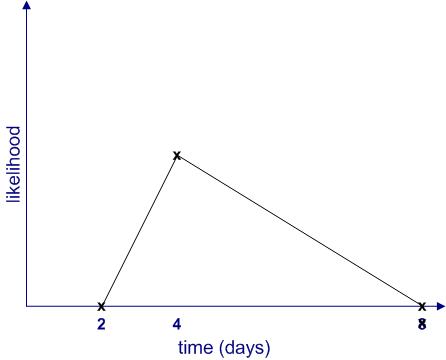

Since we don’t know anything about intermediate times (I’m assuming there is no historical data available, and I’ll have more to say about this later…), the simplest thing to do is to assume that the likelihood increases linearly (as a straight line) from 2 to 4 days and decreases in the same way from 4 to 8 days as shown in Figure 2. This gives us the well-known triangular distribution.

Note: The term distribution is simply a fancy word for a plot of likelihood vs. time.
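The straight-line assumption can be sketched in a few lines of Python. This is only an illustration: the function name `relative_likelihood` and its parameter names are hypothetical, and the values returned are relative likelihoods scaled so that the peak at 4 days equals 1, not yet numerical likelihoods.

```python
def relative_likelihood(t, low=2.0, mode=4.0, high=8.0):
    """Relative likelihood of finishing at time t (in days), with the peak scaled to 1.

    Assumes the straight-line (triangular) shape: zero outside low..high,
    rising linearly from low to mode, then falling linearly from mode to high.
    """
    if t <= low or t >= high:
        return 0.0
    if t <= mode:
        return (t - low) / (mode - low)
    return (high - t) / (high - mode)


# Relative likelihood at a few completion times
for days in (2, 3, 4, 6, 8):
    print(days, relative_likelihood(days))
```

Under this assumption, finishing in 3 days and finishing in 6 days are each half as likely as finishing in 4 days.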

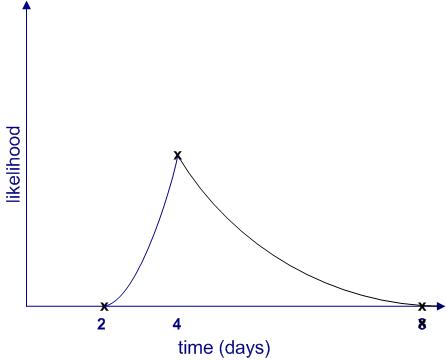

Of course, this isn’t the only possibility; there are an infinite number of others. Figure 3 is another (admittedly weird) example.

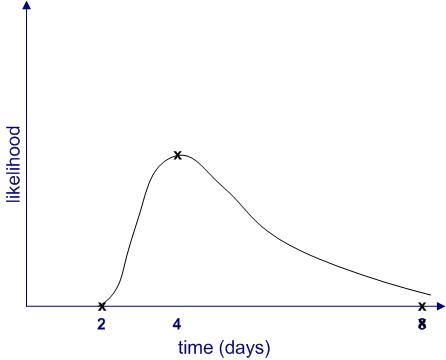

Further, it is quite possible that the upper limit (8 days) is not a hard one. It may be that in exceptional cases the task could take much longer (say, if you call in sick for two weeks) or even not be completed at all (say, if you leave for that mythical greener pasture). Catering for the latter possibility, the shape of the likelihood might resemble Figure 4.

From the figures above, we see that uncertainties are shapes rather than single numbers, a notion popularised by Sam Savage in his book, The Flaw of Averages. Moreover, the “shape of things to come” depends on a host of factors, some of which may not even be on the radar when a future event is being estimated.

Making likelihood precise

Thus far, I have used the word “likelihood” without bothering to define it. It’s time to make the notion more precise. I’ll begin by asking the question: what common sense properties do we expect a quantitative measure of likelihood to have?

Consider the following:

- If an event is impossible, its likelihood should be zero.

- The sum of likelihoods of all possible events should equal complete certainty. That is, it should be a constant. As this constant can be anything, let us define it to be 1.

In terms of the example above, if we denote time by $t$ and the likelihood by $P(t)$, then:

$$P(t) = 0 \quad \text{for } t < 2 \text{ and } t > 8$$

and

$$\sum_{t} P(t) = 1 \quad \text{where } 2 \leq t \leq 8$$

where $\sum_{t}$ denotes the sum of all non-zero likelihoods – i.e. those that lie between 2 and 8 days. In simple terms this is the area enclosed by the likelihood curves and the x axis in figures 2 to 4. (Technical note: since $t$ is a continuous variable, this should be denoted by an integral rather than a simple sum, but this is a technicality that need not concern us here.)

$P(t)$ is, in fact, what mathematicians call probability – which explains why I have used the symbol $P$ rather than $L$. Now that I’ve explained what it is, I’ll use the word “probability” instead of “likelihood” in the remainder of this article.

With these assumptions in hand, we can now obtain numerical values for the probability of completion for all times between 2 and 8 days. This can be figured out by noting that the area under the probability curve (the triangle in figure 2 and the weird shape in figure 3) must equal 1. I won’t go into any further details here, but those interested in the maths for the triangular case may want to take a look at this post where the details have been worked out.
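For a quick feel for the numbers, here is a brief sketch of the triangular case using the values from the example. The symbols $a = 2$, $c = 4$ and $b = 8$ (minimum, most likely and maximum times), $h$ (the height of the peak) and $P(t)$ (the probability at time $t$) are shorthand introduced for this sketch, not notation taken from the linked post. Requiring the area of the triangle to equal 1 fixes the peak height:

$$\tfrac{1}{2}\,(b - a)\,h = 1 \quad\Rightarrow\quad h = \frac{2}{b - a} = \frac{2}{8 - 2} = \frac{1}{3}$$

The two straight lines that meet at this peak are then:

$$P(t) = h\,\frac{t - a}{c - a} = \frac{t - 2}{6} \quad \text{for } 2 \leq t \leq 4$$

$$P(t) = h\,\frac{b - t}{b - c} = \frac{8 - t}{12} \quad \text{for } 4 \leq t \leq 8$$

As a check, the area to the left of the peak is $\tfrac{1}{2} \times 2 \times \tfrac{1}{3} = \tfrac{1}{3}$ and the area to the right is $\tfrac{1}{2} \times 4 \times \tfrac{1}{3} = \tfrac{2}{3}$, which add up to 1. Under the triangular assumption, then, there is a one-in-three chance of finishing within 4 days.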

The meaning of it all

(Note: parts of this section borrow from my post on the interpretation of probability in project management)

So now we understand how uncertainty is actually a shape corresponding to a range of possible outcomes, each with its own probability of occurrence. Moreover, we also know, in principle, how the probability can be calculated for any valid value of time (between 2 and 8 days). Nevertheless, we are still left with the question as to what a numerical probability really means.

As a concrete case from the example above, what do we mean when we say that there is 100% chance (probability=1) of finishing within 8 days? Some possible interpretations of such a statement include:

- If the task is done many times over, it will always finish within 8 days. This is called the frequency interpretation of probability, and is the one most commonly described in maths and physics textbooks.

- It is believed that the task will definitely finish within 8 days. This is called the belief interpretation. Note that this interpretation hinges on subjective personal beliefs.

- Based on a comparison to similar tasks, the task will finish within 8 days. This is called the support interpretation.

Note that these interpretations are based on a paper by Glen Shafer. Other papers and textbooks frame these differently.

The first thing to note is how different these interpretations are from each other. For example, the first one offers a seemingly objective interpretation whereas the second one is unabashedly subjective.

So, which is the best – or most correct – one?

A person trained in science or mathematics might claim that the frequency interpretation wins hands down because it lays out an objective, well-defined procedure for calculating probability: simply perform the same task many times and note the completion times.
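In an idealised world this procedure can be mimicked on a computer. The sketch below is only an illustration: it assumes that completion times follow the triangular shape of the earlier example (2, 4 and 8 days), that every repetition is identical and independent, and the function names are hypothetical. It uses `random.triangular` from Python's standard library to "perform" the task many times and then counts how often it finishes within a given number of days.

```python
import random


def simulate_completion_times(n_trials, low=2.0, mode=4.0, high=8.0, seed=0):
    """Draw n_trials completion times (in days) from an assumed triangular shape."""
    rng = random.Random(seed)  # fixed seed so that the run is repeatable
    return [rng.triangular(low, high, mode) for _ in range(n_trials)]


def fraction_within(times, deadline):
    """Fraction of simulated runs that finished within `deadline` days."""
    return sum(t <= deadline for t in times) / len(times)


times = simulate_completion_times(100_000)
print(fraction_within(times, 8.0))  # every simulated run finishes within 8 days
print(fraction_within(times, 4.0))  # roughly one run in three finishes within 4 days
```

The observed fractions play the role of probabilities in the frequency interpretation; the simulation works only because every repetition is, by construction, exactly the same task.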

Problem is, in real-life situations it is impossible to carry out exactly the same task over and over again. Sure, it may be possible to do almost the same task, but even straightforward tasks such as vacuuming a room or baking a cake can hold hidden surprises (vacuum cleaners do malfunction and a friend may call when one is mixing the batter for a cake). Moreover, tasks that are complex (as is often the case in project work) tend to be unique and can never be performed in exactly the same way twice. Consequently, the frequency interpretation is great in theory but not much use in practice.

“That’s OK,” another estimator might say, “when drawing up an estimate, I compared it to other similar tasks that I have done before.”

This is essentially the support interpretation (interpretation 3 above). However, although this seems reasonable, there is a problem: tasks that are superficially similar will differ in the details, and these small differences may turn out to be significant when one is actually carrying out the task. One never knows beforehand which variables are important. For example, my ability to finish a particular task within a stated time depends not only on my skill but also on things such as my workload, stress levels and even my state of mind. There are many external factors that one might not even recognize as being significant. This is a manifestation of the reference class problem.

So where does that leave us? Is probability just a matter of subjective belief?

No, not quite: in reality, estimators will use some or all of the three interpretations to arrive at “best guess” probabilities. For example, when estimating a project task, a person will likely use one or more of the following pieces of information:

- Experience with similar tasks.

- Subjective belief regarding task complexity and potential problems. Also, their “gut feeling” of how long they think it ought to take. These factors often drive excess time or padding that people work into their estimates.

- Any relevant historical data (if available)

Clearly, depending on the situation at hand, estimators may be forced to rely on one piece of information more than others. However, when called upon to defend their estimates, estimators may use other arguments to justify their conclusions depending on who they are talking to. For example, in discussions involving managers, they may use hard data presented in a way that supports their estimates, whereas when talking to their peers they may emphasise their gut feeling based on differences between the task at hand and similar ones they have done in the past. Such contradictory representations tend to obscure the means by which the estimates were actually made.

Summing up

Estimates are invariably made in the face of uncertainty. One way to get a handle on this is by estimating the probabilities associated with possible outcomes. Probabilities can be reckoned in a number of different ways. Clearly, when using them in estimation, it is crucial to understand how probabilities have been derived and the assumptions underlying these. We have seen three ways in which probabilities are interpreted corresponding to three different ways in which they are arrived at. In reality, estimators may use a mix of the three approaches so it isn’t always clear how the numerical value should be interpreted. Nevertheless, an awareness of what probability is and its different interpretations may help managers ask the right questions to better understand the estimates made by their teams.

“The Heretic’s Guide to Best Practices” wins bronze at the 5th Annual Axiom Business Book Awards.

I’m delighted to announce that the book that Paul Culmsee and I published recently has been awarded a bronze medal at the Axiom Business Book Awards for 2012, under the category Operations Management/Lean/Continuous Improvement.

We are truly honoured that the panel found our efforts worthy of an award.

If you are interested in finding out more about the book, please check out the review by Shim Marom and the one by Scott McCrickard.

There are also a number of customer reviews on Amazon.

The Heretic’s Guide is a self-published book with no big publisher marketing behind it, so we’d greatly appreciate your spreading the word!

On the unintended consequences of organisational change

Introduction

Change, as the cliché goes, is the only constant. At any given time, most organisations are either planning or implementing changes of some kind. Perhaps because of its ubiquity, the rationale and results of change are not questioned as deeply as they ought to be. In this post I describe some unintended effects of organisational change, drawing on Barbara Czarniawska’s book, A Theory of Organizing and other sources. I also briefly discuss some ways in which these side effects can be avoided.

I’ll begin with a few words about terminology. In this article planned changes (also referred to as reforms) are changes instituted in order to achieve specific goals. The goals of reforms are referred to as planned effects – that is, planned effects are intended results of change. As I discuss below, although planned effects may eventually be achieved, change initiatives have a host of unforeseen but significant consequences. These are referred to as unplanned, unintended or side effects.

This article is organised as follows: I’ll begin by describing some of the positive and negative side effects of change, following which I’ll discuss why side effects come about and how they can be managed.

Advantageous side effects of change

Although the term side effect has a negative connotation, some side effects of change can actually be advantageous. These include:

- Questioning of the status quo: In most organisations, processes and structures are taken for granted; rarely is the status quo questioned. Organisational change presents an opportunity to pose those “How can we do this better?” type questions that challenge the way things are done. Such questioning is unplanned in that it generally occurs spontaneously.

- Opportunities for reflection: This is a consequence of the previous point: questioning the status quo can cause people to reflect on how things can be done better. Again, this is an unintended consequence of a reform, not part of its planned goals. Also, it should be noted that although opportunities for reflection arise often, they are generally ignored because of time pressures.

- Spontaneous inventions: Finally, questioning of and reflecting on the status quo can trigger ideas for improvement.

Most people would agree that the above points are indeed Good Things that ought to be encouraged. However, the important point is that people who are in the throes of a planned change seldom have the time or motivation to pursue these opportunities.

Harmful side effects of change

The negative side effects of planned changes are insidious because they tend to occur as a result of inaction – i.e. by not taking corrective actions to counter the detrimental effects of change. The following side effects serve to illustrate this point:

- The aims of reform become cast in stone: The objectives of a change initiative are formulated based on an understanding of a situation as it exists at a particular point in time. Problem is, as time passes the original objectives may become irrelevant or obsolete. Yet, in many (most?) change initiatives, objectives are rarely reviewed and adjusted.

- The means get confused with the ends: Following from the previous point, a change initiative becomes pointless when its objectives are no longer relevant. However, a common reaction in such situations is to continue the initiative, justifying it as a worthwhile end in itself. For example, if the benefits of, say, a restructuring initiative become moot, the restructuring itself becomes the objective rather than the benefits that were supposed to flow from it. This helps save face as the project can be declared a success once the restructuring is completed, regardless of whether or not the promised benefits are realised.

- Improvisations and spontaneous inventions are suppressed: As I have discussed at length in this post, planning and improvisation are complementary but contradictory aspects of organisational work. A negative aspect of planned change initiatives is that they are inimical to improvisations: those responsible for overseeing the change tend to ignore, or even suppress, any improvisations that arise because they are seen as getting in the way of achieving the objectives of the primary change.

Planned change initiatives are generally implemented through programs or projects. In fact, most major projects in organisations – restructurings, enterprise system implementations etc – are aimed at implementing reforms of some kind. However, although the raison d’etre of such projects is to achieve the planned objectives, many suffer from the negative side effects mentioned above. In her book Czarniawska states, “Planned change rarely, if ever, leads to planned effects.” Although this claim may be a tad exaggerated, the significant proportion of large projects that fail suggests there is at least a whiff of truth about it.

In the next two sections I take a brief look at why planned changes fail and what can be done about it.

The origin of the side effects of change

Most structures and processes within organisations have a complex, path-dependent history. Among other things, they develop in ways that are unique to an organisation and are often deeply intertwined with each other. As a result, it is impossible to be certain about the consequences of changing processes or structures – there are just too many variables and dependencies involved.

There are two related points that flow from this:

Firstly, those who plan changes need to have a good understanding of legacy: the history of the issues that the change aims to fix and those that it may create in the future. The problem is that most of the people involved in planning, initiating and executing reforms have little appreciation of such issues.

Secondly, most major changes are conceived by a small number of people who hold positions of authority within organisations. These folks have a tendency to gloss over complexities, and often fail to involve those who have a detailed knowledge of the affected processes and structures. Consequently, their plans overlook dependencies and possible knock-on effects that can arise from them. This results in the negative side effects discussed in the previous section.

…and what can be done about them

Czarniawska recommends the following informal rules for successful change:

- Be willing to modify the objectives of the change and your path to get there as your understanding of it evolves.

- Implement lightweight processes and avoid bureaucratic procedures.

- Be open to improvisations.

This is good advice as far as it goes, but how exactly does one use it?

In our recently published book, The Heretic’s Guide to Best Practices, Paul Culmsee and I discuss how issues of legacy and lack of inclusiveness can be addressed.

Firstly, we suggest that apart from time, cost and scope (the classic iron triangle), project decision-makers would be well served by considering legacy as a separate variable in projects (also see this post on Paul’s blog for more on this point). More importantly, we describe techniques that can be used to surface hidden assumptions and aspects of history that could have a bearing on the project and those that might cause problems in the future.

Secondly, we discuss how one can work towards creating an environment in which a diverse group of stakeholders can air and reconcile their viewpoints. Such a discussion is a prerequisite to creating a plan that: a) considers as many viewpoints (variables) as possible and b) has the support of all stakeholders. Without this, any implementation is bound to have side-effects because of overlooked variables and/or the actions (or non-actions) of stakeholders who do not support the plan.

Of course, inclusiveness sounds great but it can be difficult in practice, especially in large organisations. What can decision-makers do in such cases? The answer comes from a slightly different, if rather obvious direction.

In his very illuminating book on decision-making, James March notes that organisations face messy and inconsistent environments. Given this, decisions made and implemented at lower levels have a better chance of success than those made in the rarefied air of boardrooms. Paraphrasing a statement from his book:

Since knowledge of local conditions and specialized competencies are both essential and more readily found in decentralized units, control over the details of policy implementation and adaptation of general policies to local conditions are [best] delegated to local units. From the standpoint of general management, the strategy is usually seen as one of gaining the informational and motivational advantages of using people with local involvement, [but] at the cost of accentuating problems of central coordination and control.

Indeed, most of the nasty side effects of planned change arise from over-centralisation of coordination and control. The solution is to devolve control and decision-making authority down to the level at which the changes are to be implemented.

Conclusion

Planned change fails to achieve its goals because planners cannot foresee all the consequences of change or even know which factors may be important in determining these. Moreover, individuals will view changes through the lens of their background, biases and interests. Since organisations consist of many individuals with different views, managing change is essentially a wicked problem.

To sum up, those who initiate large-scale changes should keep in mind the law of unintended consequences: any planned action will have consequences that are not intended, or even foreseen. These consequences can be managed by getting a better appreciation of the factors that affect the processes and the structures to be changed. One can gain an understanding of these factors through a consideration of legacy and/or via dialogue involving all those who work with the processes and structures that are to be changed. The simplest way to achieve both is by delegating decision making and implementation authority down to where it belongs – with the people who work at the coalface of the organisation.