
Planned failure – a project management paradox

The other day a friend and I were talking about a failed process improvement initiative in his organisation. The project had blown its budget and exceeded the allocated time by over 50%, which in itself was a problem. However, what I found more interesting was that the failure was in a sense planned – that is, given the way the initiative was structured, failure was almost inevitable. Consider the following:

- Process owners had little or no input into the project plan. The “plan” was created by management and handed down to those at the coalface of processes. This made sense from management’s point of view – they had an audit deadline to meet. However, it alienated those involved from the outset.

- The focus was on time, cost and scope; history was ignored. Legacy matters – as Paul Culmsee mentions in a recent post, “To me, considering time, cost and scope without legacy is delusional and plain dumb. Legacy informs time, cost and scope and challenges us to look beyond the visible symptoms of what we perceive as the problem to what’s really going on.” This is an insightful observation – indeed, ignoring legacy is guaranteed to cause problems down the line.

The conversation with my friend got me thinking about planned failures in general. A feature that is common to many failed projects is that planning decisions are based on dubious assumptions. Consider, for example, the following (rather common) assumptions made in project work:

- Stakeholders have a shared understanding of project goals. That is, all those who matter are on the same page regarding the expected outcome of the project.

- Key personnel will be available when needed and – more importantly – will be able to dedicate 100% of their committed time to the project.

The first assumption may be moot because stakeholders view a project in terms of their priorities and these may not coincide with those of other stakeholder groups. Hence the mismatch of expectations between, say, development and marketing groups in product development companies. The second assumption is problematic because key project personnel are often assigned more work than they can actually do. Interestingly, this happens because of flawed organisational procedures rather than poor project planning or scheduling – see my post on the resource allocation syndrome for a detailed discussion of this issue.

Another factor that contributes to failure is that these and other such assumptions often come into play during the early stages of a project. Decisions that are based on these assumptions thus affect all subsequent stages of the project. To make matters worse, their effects can be amplified as the project progresses. I have discussed these and other problems in my post on front-end decision making in projects.

What is relevant from the point of view of failure is that assumptions such as the ones above are rarely queried, which raises the question of why they remain unchallenged. There are many reasons for this; some of the more common ones are:

- Groupthink: This is the tendency of members of a group to think alike because of peer pressure and insulation from external opinions. Project groups are prone to falling into this trap, particularly when they are under pressure. See this post for more on groupthink in project environments and ways to address it.

- Cognitive bias: This term refers to a wide variety of errors in perception or judgement that humans often make (see this Wikipedia article for a comprehensive list of cognitive biases). In contrast to groupthink, cognitive bias operates at the level of an individual. A common example of cognitive bias at work in projects is when people underestimate the effort involved in a project task through anchoring, over-optimism or a combination of the two (see this post for a detailed discussion of these biases at work in a project situation). Further examples can be found in my post on the role of cognitive biases in project failure, which discusses how many high profile project failures can be attributed to systematic errors in perception and judgement.

- Fear of challenging authority: Those who manage and work on projects are often reluctant to challenge assumptions made by those in positions of authority. As a result, they play along until the inevitable train wreck occurs.

So there is no paradox: planned failures occur for reasons that we know and understand. However, knowledge is one thing, acting on it quite another. The paradox will live on because in real life it is not so easy to bell the cat.

Inexplicit knowledge: what people know, but won’t tell

Introduction

Much of the knowledge that exists in organisations remains unarticulated, in the heads of those who work at the coalface of business activities. Knowledge management professionals know this well, and use the terms explicit and tacit knowledge to distinguish between knowledge that can and can’t be communicated via language. Incidentally, the term tacit knowledge was coined by Michael Polanyi – and it is important to note that he used it in a sense that is very different from what it has come to mean in knowledge management. However, that’s a topic for another post. In the present post I look at a related issue that is common in organisations: the fact that much of what people know can be made explicit, but isn’t. Since the discipline of knowledge management is in dire need of more jargon, I call this inexplicit knowledge. To borrow a phrase from Polanyi, inexplicit knowledge is what people know, but won’t tell. Below, I discuss reasons why potentially explicit knowledge remains inexplicit and what can be done about it.

Why inexplicit knowledge is common

Most people would have encountered work situations in which they chose “not to tell” – remaining silent instead of sharing knowledge that would have been helpful. Common reasons for such behaviour include:

- Fear of loss of ownership of the idea: People are attached to their ideas. One reason for not volunteering their ideas is the worry that someone else in the organisation (a peer or manager) might “steal” the idea. Sometimes such behaviour is institutionalised in the form of an “innovation committee” that solicits ideas, offering monetary incentives for those that are deemed the best (more on incentives below). Like most committee-based solutions, this one is a dud. A better option may be to put in place mechanisms to ensure that those who conceive and volunteer ideas are encouraged to see them through to fruition.

- Fear of loss of face and/or fear of reprisals: In organisational cultures that are competitive, people may fear that their ideas will be ridiculed or put down by others. Closely related to this is the fear of reprisals from management. This happens often enough, particularly when the idea challenges the status quo or those in positions of authority. One of the key responsibilities of management is to foster an environment in which people feel psychologically safe to volunteer ideas, however controversial or threatening the ideas may be.

- Lack of incentives: Some people may be willing to part with their ideas, but only at a price. To address this, organisations may offer extrinsic rewards (i.e. material items such as money, gift vouchers etc.) for worthwhile ideas. Interestingly, research has shown that non-monetary extrinsic rewards (meals, gifts etc.) are more effective than monetary ones. This makes sense – financial rewards are more easily forgotten; people are more likely to remember a meal at a top-flight restaurant than a $500 cheque. That said, it is important to note that extrinsic rewards can also lead to unintended side effects. For example, financial incentives based on quantity of contributions might lead to a glut of low-quality contributions. See the next point for a discussion of another side effect of extrinsic rewards.

- Wrong incentives: As I have discussed at length in my post on motivation in knowledge management projects, people will contribute their hard-earned knowledge only if they are truly engaged in their work. Such people are intrinsically motivated (i.e. internally motivated, independent of material rewards); their satisfaction comes from their work (yes, such people do exist!). Consequently they need little or no supervision. Intrinsic rewards are invariably non-material and they cannot be controlled by management. A surprising fact is that intrinsically motivated people can actually be turned off – even offended – by material rewards.

Psychological safety and incentives are important factors, but there is an even more important issue: the relationships between people who make up the workgroup.

Knowledge sharing and the theory of cooperative action

The work of Elinor Ostrom on collective (or cooperative) action is relevant here because knowledge sharing is a form of cooperation. According to the theory of cooperative action, there are three core relationships that promote cooperation in groups: trust, reciprocity and reputation. Below I take a look at each of these in the context of knowledge sharing:

Trust: In the end, whether we choose to share what we know is largely a matter of trust: if we believe that others will respond positively – be it through acknowledgement or encouragement via tangible or intangible rewards – then the chances are that we will tell what we know. On the other hand, if the response is likely to be negative, we may prefer to remain silent.

Reciprocity: This refers to strategies that are based on treating people in the way we believe they would treat us. We are more likely to share what we know with others if we have reason to believe that they would be just as open with us.

Reputation: This refers to the views we have about the individuals we work with. Although such views may be developed by direct observation of people’s behaviours, they are also greatly influenced by the opinions of others. The relevance of reputation is that we are more likely to be open with people who have a good reputation.

According to Ostrom, these core relationships can be enhanced by face-to-face communication and organisational rules/norms that promote openness. See my post on Ostrom’s work and its relevance to project management for more on this.

Summing up

One of the key challenges that organisations face is to get people working together in a cooperative manner. Among other things this includes getting people to share their knowledge; to “tell what they know.” Unfortunately, much of this potentially explicit knowledge remains inexplicit, locked away in people’s heads, because there is no incentive to share or, even worse, there are factors that actively discourage people from sharing what they know. These issues can be tackled by offering employees the right incentives and creating the right environment. As important as incentives are, the latter is the more important factor: the key to unlocking inexplicit knowledge lies in creating an environment of trust and openness.

On the meaning and interpretation of project documents

Introduction

Most projects generate reams of paperwork ranging from business cases to lessons learned documents. These are usually written with a specific audience in mind: business cases are intended for executive management whereas lessons learned documents are addressed to future project staff (or the portfolio police…). In view of this, such documents are intended to convey a specific message: a business case aims to convince management that a project has strategic value while a lessons learned document offers future project teams experience-based advice.

Since the writer of a project document has a clear objective in mind, it is natural to expect that the result would be largely unambiguous. In this post, I look at the potential gap between the meaning of a project document (as intended by the author) and its interpretation (by a reader). As we will see, it is far from clear that the two are the same – in fact, most often, they are not. Note that the points I make apply to any kind of written or spoken communication, not just project documents. However, in keeping with the general theme of this blog, my discussion will focus on the latter.

Meaning and truth

Let’s begin with an example. Consider the following statement taken from this sample business case:

“ABC Company has an opportunity to save 260 hours of office labor annually by automating time-consuming and error-prone manual tasks.”

Let’s ask ourselves: what is the meaning of this sentence?

On the face of it, knowing the meaning of a sentence such as the one above is equivalent to knowing the condition(s) under which the claim it makes is true. For example, the statement above implies that if the company undertakes the project (condition) then it will save the stated hours of labour (claim). This interpretation of meaning is called the truth-conditional model. Among other things, it assumes that the truth of a sentence is objective.

Most people have something like the truth-conditional model in mind when they are writing documents: they (try to) write in a way that makes the truth of their claims plausible or, better yet, evident.

Buehler’s model of language

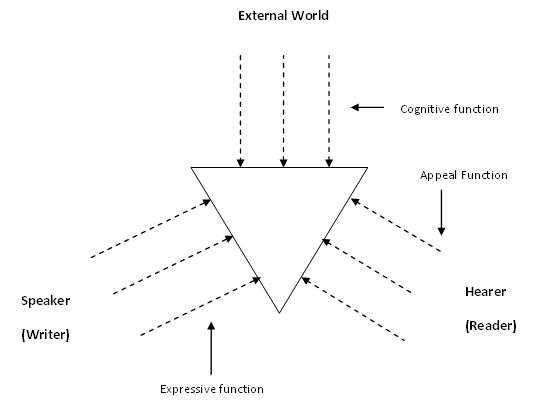

At this point, it is helpful to look at a model of language proposed by the German linguist Karl Buehler in the 1930s. According to Buehler, language has three functions, not just one as in the truth-conditional model. The three functions are:

- Cognitive: representing an (objective) truth about the world. This is the same “truth” as in the truth-conditional model.

- Expressive: expressing a point of view of the writer (or speaker).

- Appeal: making a request of the reader – or “appealing to” the reader.

A graphical representation of the model – sometimes called the organon model – is shown in Figure 1.

The basic point Buehler makes is that focusing on the cognitive function alone cannot lead to a complete picture of meaning. One has to factor in the desires and intent of the writer (or speaker) and the predispositions of those who make up the audience. Ultimately, the meaning resides not in some idealized objective truth, but in how readers interpret the document.

Meaning and interpretation

Let’s look at the statement made in the previous section in the light of Buehler’s model.

First, the statement (and indeed the document) makes some claims regarding the external, objective world. This is essentially the same as the truth-conditional view mentioned in the previous section.

Second, from the viewpoint of the expressive function, the statement (and the entire business case, for that matter) selects facts that the writer believes will convince the reader. So, among other things, the writer claims that the company will save 260 hours of manual labour by automating time-consuming and error-prone tasks. The adjectives used imply that some tasks are not carried out efficiently. The author chose to make this point; he or she could have made it another way or even not made it at all.

Finally, executives who read the business case might interpret the claim made in many different ways depending on:

- Their knowledge of the office environment (things such as the workload of office staff, scope for automation etc.). This corresponds to the cognitive function in Buehler’s model.

- Their own predispositions, intentions and desires and those that they impute to the author. This corresponds to the appeal and expressive functions.

For instance, the statement might be viewed as irrelevant by an executive who believes that the existing office staff are perfectly capable of dealing with the workload (“They need to work smarter”, he might say). On the other hand, if he knows that the business case has been written up by the IT department (who are currently looking to justify their budgets), he might well question the validity of the statement and ask for details of how the figure of 260 hours was arrived at. The point is: even a simple and seemingly unambiguous statement (from the point of view of the writer) might be interpreted in a host of unexpected ways.

More than just “sending and receiving”

The standard sender-receiver model of communication is simplistic. Among other things it assumes that interpretation is “just” a matter of decoding a message correctly. The general assumption is that:

…If the requisite information has been properly packed in a message, only someone who is deficient could fail to get it out. This partitioning of responsibility between the sender and the recipient often results in reciprocal blaming for communication. (Quoted from Questions and Information: contrasting metaphors by Thomas Lauer)

Buehler’s model reminds us that any communication – as clear as it may seem to the sender – is open to being interpreted in a variety of different ways by the receiver. Moreover, the two parties need to understand each other’s intent and motives, which are generally not open to view.

Wrapping up

The meaning of project documents isn’t as clear-cut as is usually assumed. This is so even for documents that are thought of as being unambiguous (such as contracts or status reports). Writers write from their point of view, which may differ considerably from that of their readers. Further, phrases and sentences which seem clear to a writer can be interpreted in a variety of ways by readers, depending on their situation and motivations. The bottom line is that the writer must not only strive for clarity of expression, but must also try to anticipate ways in which readers might interpret what’s written.