Archive for the ‘portfolio management’ Category

Planned failure – a project management paradox

The other day a friend and I were talking about a failed process improvement initiative in his organisation. The project had blown its budget and exceeded the allocated time by over 50%, which in itself was a problem. However, what I found more interesting was that the failure was in a sense planned – that is, given the way the initiative was structured, failure was almost inevitable. Consider the following:

- Process owners had little or no input into the project plan. The “plan” was created by management and handed down to those at the coalface of processes. This made sense from management’s point of view – they had an audit deadline to meet. However, it alienated those involved from the outset.

- The focus was on time, cost and scope; history was ignored. Legacy matters – as Paul Culmsee mentions in a recent post, “To me, considering time, cost and scope without legacy is delusional and plain dumb. Legacy informs time, cost and scope and challenges us to look beyond the visible symptoms of what we perceive as the problem to what’s really going on.” This is an insightful observation – indeed, ignoring legacy is guaranteed to cause problems down the line.

The conversation with my friend got me thinking about planned failures in general. A feature that is common to many failed projects is that planning decisions are based on dubious assumptions. Consider, for example, the following (rather common) assumptions made in project work:

- Stakeholders have a shared understanding of project goals. That is, all those who matter are on the same page regarding the expected outcome of the project.

- Key personnel will be available when needed and – more importantly – will be able to dedicate 100% of their committed time to the project.

The first assumption is questionable because stakeholders view a project in terms of their own priorities, and these may not coincide with those of other stakeholder groups. Hence the mismatch of expectations between, say, development and marketing groups in product development companies. The second assumption is problematic because key project personnel are often assigned more work than they can actually do. Interestingly, this happens because of flawed organisational procedures rather than poor project planning or scheduling: see my post on the resource allocation syndrome for a detailed discussion of this issue.

Another factor that contributes to failure is that these and similar assumptions often come into play during the early stages of a project. Decisions based on them therefore affect all subsequent stages of the project and, to make matters worse, their effects can be amplified as the project progresses. I have discussed these and other problems in my post on front-end decision making in projects.

What is relevant from the point of view of failure is that assumptions such as the ones above are rarely queried, which raises the question of why they remain unchallenged. There are many reasons for this; some of the more common ones are:

- Groupthink: This is the tendency of members of a group to think alike because of peer pressure and insulation from external opinions. Project groups are prone to falling into this trap, particularly when they are under pressure. See this post for more on groupthink in project environments and ways to address it.

- Cognitive bias: This term refers to a wide variety of errors in perception or judgement that humans often make (see this Wikipedia article for a comprehensive list of cognitive biases). In contrast to groupthink, cognitive bias operates at the level of an individual. A common example of cognitive bias at work in projects is when people underestimate the effort involved in a project task through anchoring, over-optimism or both (see this post for a detailed discussion of these biases at work in a project situation). Further examples can be found in my post on the role of cognitive biases in project failure, which discusses how many high-profile project failures can be attributed to systematic errors in perception and judgement.

- Fear of challenging authority: Those who manage and work on projects are often reluctant to challenge assumptions made by those in positions of authority. As a result, they play along until the inevitable train wreck occurs.

So there is no paradox: planned failures occur for reasons that we know and understand. However, knowledge is one thing, acting on it quite another. The paradox will live on because in real life it is not so easy to bell the cat.

Six common pitfalls in project risk analysis

The discussion of risk presented in most textbooks and project management courses follows the well-trodden path of risk identification, analysis, response planning and monitoring (see the PMBOK guide, for example). All good stuff, no doubt. However, much of the guidance offered is at a very high level. Among other things, there is little practical advice on what not to do. In this post I address this issue by outlining some of the common pitfalls in project risk analysis.

1. Reliance on subjective judgement: People see things differently: one person’s risk may even be another person’s opportunity. For example, using a new technology in a project can be seen as a risk (when focusing on the increased chance of failure) or an opportunity (when focusing on the advantages of being an early adopter). This is a somewhat extreme example, but the fact remains that individual perceptions influence the way risks are evaluated. Another problem with subjective judgement is that it is prone to cognitive biases, that is, systematic errors in perception and judgement. Many high-profile project failures can be attributed to such biases: see my post on cognitive bias and project failure for more on this. Given these points, potential risks should be discussed from different perspectives with the aim of reaching a common understanding of what they are and how they should be dealt with.

2. Using inappropriate historical data: Purveyors of risk analysis tools and methodologies exhort project managers to determine probabilities using relevant historical data. The word relevant is important: it emphasises that the data used to calculate probabilities (or distributions) should come from situations similar to the one at hand. Consider, for example, the risk that a particular developer will not be able to deliver a module by a specified date. One might have historical data for the developer, but the question remains as to which data points should be used. Clearly, only data points from projects similar to the one at hand should be used. But how is similarity defined? Although this is not an easy question to answer, it is critical as far as the relevance of the estimate is concerned. See my post on the reference class problem for more on this point.

3. Focusing on numerical measures exclusively: There is a widespread perception that quantitative measures of risk are better than qualitative ones. However, even where reliable and relevant data is available, the measures still need to be based on sound methodologies. Unfortunately, ad-hoc techniques abound in risk analysis: see my posts on Cox’s risk matrix theorem and limitations of risk scoring methods for more on these. Risk metrics based on such techniques can be misleading. As Glen Alleman points out in this comment, in many situations qualitative measures may be more appropriate and accurate than quantitative ones.

4. Ignoring known risks: It is surprising how often known risks are ignored. The reasons for this have to do with politics and mismanagement. I won’t dwell on this as I have dealt with it at length in an earlier post.

5. Overlooking the fact that risks are distributions, not point values: Risks are inherently uncertain, and any uncertain quantity is represented by a range of values (each with an associated probability) rather than a single number (see this post for more on this point). Because historical data is scarce or unreliable, distributions are often assumed a priori: that is, analysts assume that the risk distribution has a particular form (say, normal or lognormal) and then estimate the distribution's parameters from historical data. Further, analysts often choose simple distributions that are easy to work with mathematically. These distributions often do not reflect reality. For example, they may leave the analysis vulnerable to “black swan” occurrences because they do not account for outliers.

6. Failing to update risks in real time: Risks are rarely static; they evolve over time, influenced by circumstances and events both inside and outside the project. For example, the acquisition of a key vendor by a mega-corporation is likely to affect the delivery of that module you are waiting on, quite possibly adversely. Such a change in risk is obvious; many others may not be. Consequently, project managers need to re-evaluate and update risks periodically. To be fair, this is a point that most textbooks make, but it is advice that is not followed as often as it should be.
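Pitfall 3 mentions the limitations of risk scoring methods. The following minimal Python sketch shows one way an ad-hoc score can mislead. It assumes a hypothetical 3×3 risk matrix whose probability and impact axes are each split into three equal-width bands, with the score taken as the product of the two band numbers; the band edges, the `Risk` class and the two risks are all invented for illustration. It is offered in the spirit of Cox's critique of risk matrices, not as a reproduction of his theorem.

```python
from dataclasses import dataclass

# Hypothetical 3x3 risk matrix: each axis is split into three equal-width bands.
PROB_BOUNDS = (1 / 3, 2 / 3)        # lower edges of the "medium" and "high" probability bands
IMPACT_BOUNDS = (333_000, 666_000)  # lower edges of the "medium" and "high" impact bands ($)


def band(value: float, bounds: tuple[float, ...]) -> int:
    """Return 1 (low), 2 (medium) or 3 (high) depending on which band value falls in."""
    return 1 + sum(value >= bound for bound in bounds)


@dataclass
class Risk:
    name: str
    probability: float  # chance the risk eventuates (0..1)
    impact: float       # cost if it does ($)

    def matrix_score(self) -> int:
        # Ad-hoc score: probability band times impact band (range 1..9).
        return band(self.probability, PROB_BOUNDS) * band(self.impact, IMPACT_BOUNDS)

    def expected_loss(self) -> float:
        # Quantitative comparison: probability-weighted impact.
        return self.probability * self.impact


if __name__ == "__main__":
    # Two invented risks chosen to sit just either side of the band edges.
    risks = [Risk("A", 0.34, 340_000), Risk("B", 0.32, 990_000)]
    for r in risks:
        print(f"{r.name}: matrix score {r.matrix_score()}, expected loss ${r.expected_loss():,.0f}")
```

Under these assumptions risk A lands in the medium/medium cell (score 4) and risk B in the low/high cell (score 3), so the matrix ranks A above B even though B's expected loss (about $316,800) is nearly three times A's (about $115,600). Moving the band edges would shuffle the risks between cells, which is part of the problem: the ranking depends on arbitrary design choices.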
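To make pitfall 5 concrete, here is a minimal Python sketch of what a single-number estimate leaves out. It assumes, purely for illustration, that the time a developer takes to deliver a module follows a lognormal distribution with a median of 10 days and a shape parameter (sigma) of 0.5. These numbers, and the helper names `simulate_durations` and `summarise`, are hypothetical and not drawn from any real project; the lognormal form is itself exactly the kind of a priori assumption the pitfall warns about.

```python
import math
import random
import statistics


def simulate_durations(median_days: float, sigma: float, n: int = 10_000, seed: int = 1) -> list[float]:
    """Sample task durations from a lognormal distribution.

    The lognormal form and its parameters are illustrative assumptions,
    not values derived from real project data.
    """
    rng = random.Random(seed)  # seeded so results are reproducible
    mu = math.log(median_days)  # the median of a lognormal is exp(mu)
    return [rng.lognormvariate(mu, sigma) for _ in range(n)]


def summarise(samples: list[float], point_estimate: float) -> dict[str, float]:
    """Report what a single-number estimate hides about the distribution."""
    deciles = statistics.quantiles(samples, n=10)  # 9 cut points: 10th..90th percentiles
    return {
        "mean": statistics.fmean(samples),
        "median": statistics.median(samples),
        "p90": deciles[-1],
        "prob_overrun": sum(s > point_estimate for s in samples) / len(samples),
    }


if __name__ == "__main__":
    point_estimate = 10.0  # hypothetical "10 days" estimate for the module
    samples = simulate_durations(median_days=point_estimate, sigma=0.5)
    for name, value in summarise(samples, point_estimate).items():
        print(f"{name:>12}: {value:.2f}")
```

With these assumptions the simulated mean comes out at roughly 11.3 days and the 90th percentile at roughly 19 days, so a plan built on the 10-day point value would be overrun about half the time. Swapping in a different assumed distribution (a heavier-tailed one, say) changes those figures, which is why the choice of distribution deserves as much scrutiny as the data used to fit it.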

This brings me to the end of my (subjective) list of risk analysis pitfalls. Regular readers of this blog will have noticed that some of the points made in this post are similar to the ones I made in my post on estimation errors. This is no surprise: risk analysis and project estimation are activities that deal with an uncertain future, so it is to be expected that they have common problems and pitfalls. One could generalise this point: any activity that involves gazing into a murky crystal ball will be plagued by similar problems.

On the meaning and interpretation of project documents

Introduction

Most projects generate reams of paperwork ranging from business cases to lessons learned documents. These are usually written with a specific audience in mind: business cases are intended for executive management whereas lessons learned documents are addressed to future project staff (or the portfolio police…). In view of this, such documents are intended to convey a specific message: a business case aims to convince management that a project has strategic value, while a lessons learned document offers future project teams experience-based advice.

Since the writer of a project document has a clear objective in mind, it is natural to expect that the result would be largely unambiguous. In this post, I look at the potential gap between the meaning of a project document (as intended by the author) and its interpretation (by a reader). As we will see, it is far from clear that the two are the same – in fact, most often, they are not. Note that the points I make apply to any kind of written or spoken communication, not just project documents. However, in keeping with the general theme of this blog, my discussion will focus on the latter.

Meaning and truth

Let’s begin with an example. Consider the following statement taken from this sample business case:

“ABC Company has an opportunity to save 260 hours of office labor annually by automating time-consuming and error-prone manual tasks.”

Let’s ask ourselves: what is the meaning of this sentence?

On the face of it, knowing the meaning of a sentence such as the one above is equivalent to knowing the condition(s) under which the claim it makes is true. For example, the statement above implies that if the company undertakes the project (condition) then it will save the stated hours of labour (claim). This interpretation of meaning is called the truth-conditional model. Among other things, it assumes that the truth of a sentence has an objective meaning.

Most people have something like the truth-conditional model in mind when they are writing documents: they (try to) write in a way that makes the truth of their claims plausible or, better yet, evident.

Buehler’s model of language

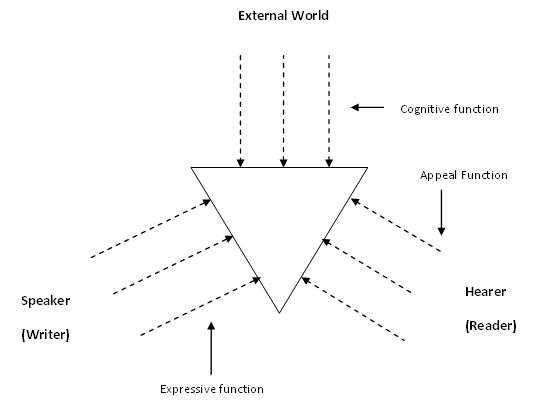

At this point, it is helpful to look at a model of language proposed by the German linguist Karl Buehler in the 1930s. According to Buehler, language has three functions, not just one as in the truth-conditional model. The three functions are:

- Cognitive: representing an (objective) truth about the world. This is the same “truth” as in the truth-conditional model.

- Expressive: expressing a point of view of the writer (or speaker).

- Appeal: making a request of the reader – or “appealing to” the reader.

A graphical representation of the model, sometimes called the organon model, is shown in Figure 1.

The basic point Buehler makes is that focusing on the cognitive function alone cannot lead to a complete picture of meaning. One has to factor in the desires and intent of the writer (or speaker) and the predispositions of those who make up the audience. Ultimately, the meaning resides not in some idealized objective truth, but in how readers interpret the document.

Meaning and interpretation

Let’s look at the statement made in the previous section in the light of Buehler’s model.

First, the statement (and indeed the document) makes some claims regarding the external, objective world. This is essentially the same as the truth-conditional view mentioned in the previous section.

Second, from the viewpoint of the expressive function, the statement (and the entire business case, for that matter) selects facts that the writer believes will convince the reader. So, among other things, the writer claims that the company will save 260 hours of manual labour by automating time-consuming and error-prone tasks. The adjectives used imply that some tasks are not carried out efficiently. The author chose to make this point; he or she could have made it another way or even not made it at all.

Finally, executives who read the business case might interpret the claim in many different ways, depending on:

- Their knowledge of the office environment (things such as the workload of office staff, scope for automation etc.) and of the broader business environment. This corresponds to the cognitive function in Buehler’s model.

- Their own predispositions, intentions and desires and those that they impute to the author. This corresponds to the appeal and expressive functions.

For instance, the statement might be viewed as irrelevant by an executive who believes that the existing office staff are perfectly capable of dealing with the workload (“They need to work smarter”, he might say). On the other hand, if he knows that the business case has been written up by the IT department (who are currently looking to justify their budgets), he might well question the validity of the statement and ask for details of how the figure of 260 hours was arrived at. The point is: even a simple and seemingly unambiguous statement (from the point of view of the writer) might be interpreted in a host of unexpected ways.

More than just “sending and receiving”

The standard sender-receiver model of communication is simplistic. Among other things, it assumes that understanding is “just” a matter of the receiver decoding a message correctly. The general assumption is that:

…If the requisite information has been properly packed in a message, only someone who is deficient could fail to get it out. This partitioning of responsibility between the sender and the recipient often results in reciprocal blaming for communication. (Quoted from Questions and Information: contrasting metaphors by Thomas Lauer)

Buehler’s model reminds us that any communication, however clear it may seem to the sender, is open to being interpreted in a variety of ways by the receiver. Moreover, the two parties need to understand each other’s intent and motives, which are generally not open to view.

Wrapping up

The meaning of project documents isn’t as clear-cut as is usually assumed. This is so even for documents that are thought of as being unambiguous (such as contracts or status reports). Writers write from their point of view, which may differ considerably from that of their readers. Further, phrases and sentences which seem clear to a writer can be interpreted in a variety of ways by readers, depending on their situation and motivations. The bottom line is that the writer must not only strive for clarity of expression, but must also try to anticipate ways in which readers might interpret what’s written.