The case of the missed requirement

It would have been a couple of weeks after the kit tracking system was released that Therese called Mike to report the problem.

“How’re you going, Mike?” she asked, and without waiting to hear his reply, continued, “I’m at a site doing kit allocations and I can’t find the screen that will let me allocate sub-kits.”

“What’s a sub-kit?” Mike was flummoxed; it was the first time he’d heard the term. It hadn’t come up during any of the analysis sessions, tests, or any of the countless conversations he’d had with end-users during development.

“Well, we occasionally have to break open kits and allocate different parts of it to different sites,” said Therese. “When this happens, we need to keep track of which site has which part.”

“Sorry Therese, but this never came up during any of the requirements sessions, so there is no screen.”

“What do I do? I have to record this somehow.” She was upset, and understandably so.

“Look,” said Mike, “could you make a note of the sub-kit allocations on paper – or better yet, in Excel?”

“Yeah, I could do that if I have to.”

“Great. Just be sure to record the kit identifier and which part of the kit is allocated to which site. We’ll have a chat about the sub-kit allocation process when you are back from your site visit. Once I understand the process, I should be able to have it programmed in a couple of days. When will you be back?”

“Tomorrow,” said Therese.

“OK, I’ll book something for tomorrow afternoon.”

The conversation concluded with the usual pleasantries.

After Mike hung up he wondered how they could have missed such an evidently important requirement. The application had been developed in close consultation with users. The requirements sessions had involved more than half the user community. How had they forgotten to mention such an important requirement and, more important, how had he and the other analyst not asked the question, “Are kits ever divided up between sites?”

Mike and Therese had their chat the next day. As it turned out, Mike’s off-the-cuff estimate was off by a long way. It took him over a week to add in the sub-kit functionality, and another day or so to import all the data that users had entered in Excel (and paper!) whilst the screens were being built.

The missing requirement turned out to be a pretty expensive omission.

—-

The story of Therese and Mike may ring true with those who are involved with software development. Gathering requirements is an error-prone process: users forget to mention things, and analysts don’t always ask the right questions. This is one reason why iterative development is superior to big design up front (BDUF) approaches: the former offers many more opportunities for interaction between users and analysts, and hence many more opportunities to catch those elusive requirements.

Yet, although Mike had used a joint development approach, with plenty of interaction between users and developers, this important requirement had been overlooked.

Further, as Mike’s experience corroborates, fixing issues associated with missing requirements can be expensive.

Why is this so? To offer an answer, I can do no better than to quote from Robert Glass’ book, Facts and Fallacies of Software Engineering.

Fact 25 in the book goes: Missing requirements are the hardest requirements errors to correct.

In his discussion of the above, Glass has this to say:

Why are missing requirements so devastating to problem solution? Because each requirement contributes to the level of difficulty of solving a problem, and the interaction among all those requirements quickly escalates the complexity of the problem’s solution. The omission of one requirement may balloon into failing to consider a whole host of problems in designing a solution.

Of course, by definition, missing requirements are hard to test for. Glass continues:

Why are missing requirements hard to detect and correct? Because the most basic portion of the error removal process in software is requirements-driven. We define test cases to verify that each requirement in the problem solution has been satisfied. If a requirement is not present, it will not appear in the specification and, therefore, will not be checked during any of the specification-driven reviews or inspections; further there will be no test cases built to verify its satisfaction. Thus the most basic error removal approaches will fail to detect its absence.
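Glass’ point about requirements-driven testing can be made concrete with a small sketch. Everything in it is hypothetical: the `KitTracker` class, the `REQUIREMENTS` list and the test function are stand-ins I made up for illustration, not the actual kit tracking system. The idea is simply that when tests are derived one-for-one from the listed requirements, a requirement that was never listed generates no test, so the suite passes even though the logic is absent.

```python
# Hypothetical requirements captured during analysis.
# Sub-kit allocation never came up, so it is not listed here.
REQUIREMENTS = {
    "allocate_kit": "A whole kit can be allocated to a site",
}


class KitTracker:
    """Illustrative stand-in for the kit tracking system."""

    def __init__(self):
        self.allocations = {}  # kit identifier -> site

    def allocate_kit(self, kit_id, site):
        self.allocations[kit_id] = site


def test_allocate_kit():
    tracker = KitTracker()
    tracker.allocate_kit("K1", "Site A")
    assert tracker.allocations["K1"] == "Site A"


# One test per listed requirement: this is what "requirements-driven" means here.
TESTS = {"allocate_kit": test_allocate_kit}


def run_requirement_tests():
    """Run the test for every listed requirement and return the names that passed."""
    passed = []
    for name in REQUIREMENTS:
        TESTS[name]()  # raises AssertionError if the requirement is not met
        passed.append(name)
    return passed


print(run_requirement_tests())
# The suite is green, yet nothing ever checks for sub-kit allocation.
print(hasattr(KitTracker, "allocate_sub_kit"))
```

Every listed requirement passes, and the missing one is invisible because there is nothing in the specification to hang a test on.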

As a corollary to the above fact, Glass states that:

The most persistent software errors – those that escape the testing process and persist into the production version of the software – are errors of omitted logic. Missing requirements result in omitted logic.

In his research, Glass found that 30% of persistent errors were errors of omitted logic! It is pretty clear why these errors persist – because it is difficult to test for something that isn’t there. In the story above, the error would have remained undetected until someone needed to allocate sub-kits – something not done very often. This is probably why Therese and other users forgot to mention it. Why the analysts didn’t ask is another question: it is their job to ask questions that will catch such elusive requirements. And before Mike reads this and cries foul, I should admit that I was the other analyst on the project, and I have absolutely no defence to offer.

On the limitations of scoring methods for risk analysis

Introduction

A couple of months ago I wrote an article highlighting some of the pitfalls of using risk matrices. Risk matrices are an example of scoring methods, techniques which use ordinal scales to assess risks. In these methods, risks are ranked by some predefined criteria such as impact or expected loss, and the ranking is then used as the basis for decisions on how the risks should be addressed. Scoring methods are popular because they are easy to use. However, as Douglas Hubbard points out in his critique of current risk management practices, many commonly used scoring techniques are flawed. This post – based on Hubbard’s critique and research papers quoted therein – is a brief look at some of the flaws of risk scoring techniques.

Commonly used risk scoring techniques and problems associated with them

Scoring techniques fall under two major categories:

- Weighted scores: These use several ordered scales which are weighted according to perceived importance. For example: one might be asked to rate financial risk, technical risk and organisational risk on a scale of 1 to 5 for each, and then weight them by factors of 0.6, 0.3 and 0.1 respectively (possibly because the CFO – who happens to be the project sponsor – is more concerned about financial risk than any other risks). The point is, the scores and weights assigned can be highly subjective – more on that below.

- Risk matrices: These rank risks along two dimensions – probability and impact – and assign them a qualitative ranking of high, medium or low depending on where they fall. Cox’s theorem shows such categorisations are internally inconsistent because the category boundaries are arbitrarily chosen.
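To see how much the subjective weights matter in a weighted score, here is a minimal sketch. The 0.6, 0.3 and 0.1 weights are the ones from the example above; the two projects, their scores and the alternative weighting are assumptions made up purely for illustration.

```python
# Weights from the example above (the CFO cares most about financial risk).
WEIGHTS_CFO = {"financial": 0.6, "technical": 0.3, "organisational": 0.1}
# Assumed alternative: a sponsor who cares most about technical risk.
WEIGHTS_ALT = {"financial": 0.3, "technical": 0.6, "organisational": 0.1}

# Hypothetical scores on a 1 to 5 scale for two illustrative projects.
project_a = {"financial": 4, "technical": 2, "organisational": 3}
project_b = {"financial": 2, "technical": 4, "organisational": 3}


def weighted_score(scores, weights):
    """Return the weighted sum of the ordinal scores."""
    return sum(weights[name] * scores[name] for name in weights)


def riskier(weights):
    """Return which project gets the higher weighted risk score."""
    a = weighted_score(project_a, weights)
    b = weighted_score(project_b, weights)
    if a > b:
        return "A"
    if b > a:
        return "B"
    return "tie"


print(riskier(WEIGHTS_CFO))
print(riskier(WEIGHTS_ALT))
```

With the same scores, swapping the financial and technical weights reverses which project looks riskier. The ranking is therefore as much a statement about who chose the weights as it is about the risks.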

Hubbard makes the point that, although both the above methods are endorsed by many standards and methodologies (including those used in project management), they should be used with caution because they are flawed. To quote from his book:

Together these ordinal/scoring methods are the benchmark for the analysis of risks and/or decisions in at least some component of most large organizations. Thousands of people have been certified in methods based in part on computing risk scores like this. The major management consulting firms have influenced virtually all of these standards. Since what these standards all have in common is the use of various scoring schemes instead of actual quantitative risk analysis methods, I will call them collectively the “scoring methods.” And all of them, without exception, are borderline or worthless. In practice, they may make many decisions far worse than they would have been using merely unaided judgements.

What is the basis for this claim? Hubbard points to the following:

- Scoring methods do not make any allowance for flawed perceptions of analysts who assign scores – i.e. they do not consider the effect of cognitive bias. I won’t dwell on this as I have previously written about the effect of cognitive biases in project risk management – see this post and this one, for example.

- Qualitative descriptions assigned to each score are understood differently by different people. Further, there is rarely any objective guidance as to how an analyst is to distinguish between a high or medium risk. Such advice may not even help: research by Budescu, Broomell and Po shows that there can be huge variances in understanding of qualitative descriptions, even when people are given specific guidelines on what the descriptions or terms mean.

- Scoring methods add their own errors. Below are brief descriptions of some of these:

- In his paper on the risk matrix theorem, Cox mentions that “Typical risk matrices can correctly and unambiguously compare only a small fraction (e.g., less than 10%) of randomly selected pairs of hazards. They can assign identical ratings to quantitatively very different risks.” He calls this behaviour “range compression” – and it applies to any scoring technique that uses ranges.

- Assigned scores tend to cluster in the middle-to-high range. Analysis by Hubbard shows that, on a 5 point scale, 75% of all responses are 3 or 4. This implies that changing a score from 3 to 4 or vice-versa can have a disproportionate effect on classification of risks.

- Scores implicitly assume that the magnitude of the quantity being assessed is directly proportional to the scale. For example, a score of 2 implies that the criterion being measured is twice as large as it would be for a score of 1. However, in reality, criteria are rarely linear as implied by such a scale.

- Scoring techniques often presume that the factors being scored are independent of each other – i.e. there are no correlations between factors. This assumption is rarely tested or justified in any way.
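Range compression is easy to demonstrate with a toy risk matrix. The sketch below is illustrative only: the category cutoffs and the two risks are assumptions I have chosen, and expected loss (probability times impact) is used as the quantitative yardstick.

```python
# Assumed category boundaries for a 3 x 3 matrix (not taken from any standard).
PROBABILITY_CUTOFFS = (0.33, 0.66)       # low up to 0.33, medium up to 0.66, else high
IMPACT_CUTOFFS = (100_000, 1_000_000)    # in dollars


def band(value, cutoffs):
    """Map a value to 'low', 'medium' or 'high' using two cutoffs."""
    low_max, medium_max = cutoffs
    if value <= low_max:
        return "low"
    if value <= medium_max:
        return "medium"
    return "high"


def matrix_rating(probability, impact):
    """Return the (probability band, impact band) cell a risk falls into."""
    return band(probability, PROBABILITY_CUTOFFS), band(impact, IMPACT_CUTOFFS)


def expected_loss(probability, impact):
    return probability * impact


# Two hypothetical risks: (probability, impact in dollars).
risk_x = (0.35, 150_000)
risk_y = (0.65, 950_000)

print(matrix_rating(*risk_x), expected_loss(*risk_x))
print(matrix_rating(*risk_y), expected_loss(*risk_y))
```

Both risks land in the same cell of the matrix, yet the expected loss of the second is more than ten times that of the first. The matrix cannot tell them apart, which is exactly the identical-ratings problem Cox describes.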

Many project management standards advocate the use of scoring techniques. To be fair, in many situations they are adequate as long as they are used with an understanding of their limitations. Seen in this light, Hubbard’s book is an admonition to standards and textbook writers to be more critical of the methods they advocate, and a warning to practitioners that an uncritical adherence to standards and best practices is not the best way to manage project risks.

Scoring done right

Just to be clear, Hubbard’s criticism is directed against scoring methods that use arbitrary, qualitative scales which are not justified by independent analysis. There are other techniques which, though superficially similar to these flawed scoring methods, are actually quite robust because they:

- Are based on observations.

- Use real measures (as opposed to arbitrary ones – such as “alignment with business objectives” on a scale of 1 to 5, without defining what “alignment” means).

- Are validated after the fact (and hence refined with use).

As an example of a sound scoring technique, Hubbard quotes this paper by Dawes, which presents evidence that linear scoring models are superior to intuition in clinical judgements. Strangely, although the weights themselves can be obtained through intuition, the scoring model outperforms clinical intuition. This happens because human intuition is good at identifying important factors, but not so hot at evaluating the net effect of several, possibly competing factors. Hence simple linear scoring models can outperform intuition. The key here is that the models are validated by checking the predictions against reality.
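The mechanics of "score, then validate" can be sketched in a few lines. Note that this is not Dawes’ analysis: the factors, the intuitively chosen weights and the past cases below are all invented for illustration, so the numbers prove nothing about the method. The sketch only shows what checking a linear scoring model against observed outcomes looks like.

```python
from math import sqrt


def linear_score(factors, weights):
    """Weighted sum of the factor values."""
    return sum(w * x for w, x in zip(weights, factors))


def pearson(xs, ys):
    """Pearson correlation between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# Hypothetical past cases: (measured factor values, outcome observed later).
cases = [
    ((3, 1, 2), 10),
    ((1, 3, 1), 4),
    ((4, 2, 3), 14),
    ((2, 2, 1), 7),
    ((5, 1, 4), 17),
]

# Weights picked by intuition: the expert only decides which factors
# matter and in which direction, not their exact size.
weights = (2, -1, 1)

scores = [linear_score(factors, weights) for factors, _ in cases]
outcomes = [outcome for _, outcome in cases]

# Validation step: how well do the model's scores track what actually happened?
print(pearson(scores, outcomes))
```

The important part is the last line. A scoring model earns its keep only if this kind of check is run against real outcomes, and rerun as new cases come in.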

Another class of techniques uses axioms based on logic to reduce inconsistencies in decisions. An example of such a technique is multi-attribute utility theory. Since they are based on logic, these methods can also be considered to have a solid foundation unlike those discussed in the previous section.

Conclusions

Many commonly used scoring methods in risk analysis are based on flaky theoretical foundations – or worse, none at all. To compound the problem, they are often used without any validation. A particularly ubiquitous example is the well-known and loved risk matrix. In his paper on risk matrices, Tony Cox shows how risk matrices can sometimes lead to decisions that are worse than those made on the basis of a coin toss. The fact that this is a possibility – even if only a small one – should worry anyone who uses risk matrices (or other flawed scoring techniques) without an understanding of their limitations.

Building project knowledge – a social constructivist view

Introduction

Conventional approaches to knowledge management on projects focus on the cognitive (or thought-related) and mechanical aspects of knowledge creation and capture. There is an alternate view, one which considers knowledge as being created through interactions between people who – through their interactions – develop mutually acceptable interpretations of theories and facts in ways that suit their particular needs. That is, project knowledge is socially constructed. If this is true, then project managers need to pay attention to the environmental and social factors that influence knowledge construction. This is the position taken by Paul Jackson and Jane Klobas in their paper entitled, Building knowledge in projects: A practical application of social constructivism to information systems development, which presents a knowledge creation / sharing process model based on social constructivist theory. This article is a summary and review of the paper.

A social constructivist view of knowledge

Jackson and Klobas begin with the observation that engineering disciplines are founded on the belief that knowledge can be expressed in propositions that correspond to a reality which is independent of human perception. However, there is an alternate view that knowledge is not absolute, but relative – i.e. it depends on the mental models and beliefs used to interpret facts, objects and events. A relevant example is how a software product is viewed by business users and software developers. The former group may see an application in terms of its utility whereas the latter may see it as an instance of a particular technology. Such perception gaps can also occur within seemingly homogeneous groups – such as teams composed of software developers, for example. This can happen for a variety of reasons such as the differences in the experience and cultural backgrounds of those who make up the group. Social constructivism looks at how such gaps can be bridged.

The authors’ discussion relies on the work of Berger and Luckmann, who described how the gap between perceptions of different individuals can be overcome to create a socially constructed, shared reality. The phrase “socially constructed” implies that reality (as it pertains to a project, for example) is created via a common understanding of issues, followed by mutual agreement between all the players as to what comprises that reality. For me this view strikes a particular chord because it is akin to the stated aims of dialogue mapping, a technique that I have described in several earlier posts (see this article for an example relevant to projects).

Knowledge in information systems development as a social construct

First up, the authors make the point that information systems development (ISD) projects are:

…intensive exercises in constructing social reality through process and data modeling. These models are informed with the particular world-view of systems designers and their use of particular formal representations. In ISD projects, this operational reality is new and explicitly constructed and becomes understood and accepted through negotiated agreement between participants from the two cultures of business and IT

Essentially, knowledge emerges through interaction and discussion as the project proceeds. However, the methodologies used in design are typically founded on an engineering approach, which takes a positivist view rather than a social one. As the authors suggest,

Perhaps the social constructivist paradigm offers an insight into continuing failure, namely that what is happening in an ISD project is far more complex than the simple translation of a description of an external reality into instructions for a computer. It is the emergence and articulation of multiple, indeterminate, sometimes unconscious, sometimes ineffable realities and the negotiated achievement of a consensus of a new, agreed reality in an explicit form, such as a business or data model, which is amenable to computerization.

With this in mind, the authors aim to develop a model that addresses the shortcomings of the traditional, positivist view of knowledge in ISD projects. They do this by representing Berger and Luckmann’s theory of social constructivism in terms of a knowledge process model. They then identify management principles that map on to these processes. These principles form the basis of a survey which is used as an operational version of the process model. The operational model is then assessed by experts and tested by a project manager in a real-life project.

The knowledge creation/sharing process model

The process model that Jackson and Klobas describe is based on Berger and Luckmann’s work.

The model describes how personal knowledge is created – personal knowledge being what an individual knows. Personal knowledge is built up using mental models of the world – these models are frameworks that individuals use to make sense of the world.

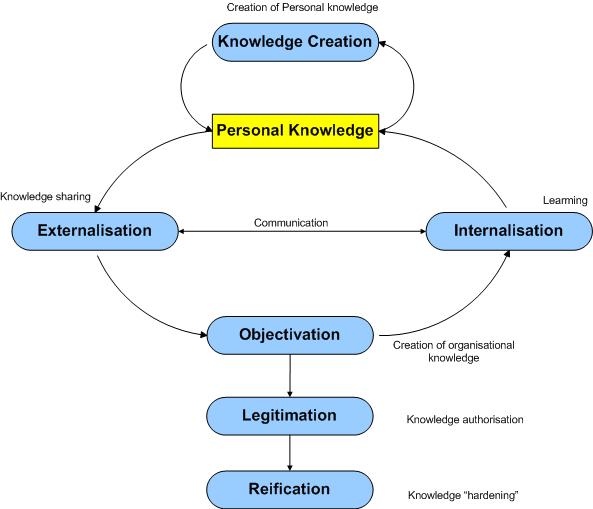

According to the Jackson-Klobas process model, personal knowledge is built up through a number of processes, including:

Internalisation: The absorption of knowledge by an individual.

Knowledge creation: The construction of new knowledge through repetitive performance of tasks (learning skills) or becoming aware of new ideas, ways of thinking or frameworks. The latter corresponds to learning concepts and theories, or even new ways of perceiving the world. These correspond to a change in subjective reality for the individual.

Externalisation: The representation and description of knowledge using speech or symbols so that it can be perceived and internalized by others. Think of this as explaining ideas or procedures to other individuals.

Objectivation: The creation of shared constructs that represent a group’s understanding of the world. At this point, knowledge is objectified – and is perceived as having an existence independent of individuals.

Legitimation: The authorization of objectified knowledge as being “correct” or “standard.”

Reification: The process by which objective knowledge assumes a status that makes it difficult to change or challenge. A familiar example of reified knowledge is any procedure or process that is “hardened” into a system – “That’s just the way things are done around here,” is a common response when such processes are challenged.

The links depicted in the figure show the relationships between these processes.

Jackson and Klobas suggest that knowledge creation in ISD projects is a social process, which occurs through continual communication between the business and IT. Sure, there are other elements of knowledge creation – design, prototyping, development, learning new skills etc. – but these amount to nought unless they are discussed, argued, agreed on and communicated through social interactions. These interactions occur in the wider context of the organization, so it is reasonable to claim that the resulting knowledge takes on a form that mirrors the social environment of the organization.

Clearly, this model of knowledge creation is very different from the usual interpretation of knowledge having an independent reality, regardless of whether it is known to the group or not.

An operational model

The above is good theory, which makes for interesting, but academic, discussions. What about practice? Can the model be operationalised? Jackson and Klobas describe an approach to testing the utility (rather than the validity) of the model. I discuss this in the following sections.

Knowledge sharing heuristics

To begin with, they surveyed the literature on knowledge management to identify knowledge sharing heuristics (i.e. experience-based techniques to enable knowledge sharing). As an example, some of the heuristics associated with the externalization process were:

- We have standard documentation and modelling tools which make business requirements easy to understand

- Stakeholders and IS staff communicate regularly through direct face-to-face contact

- We use prototypes

The authors identified more than 130 heuristics. Each of these was matched with a process in the model. According to the authors, this matching process was simple: in most cases there was no doubt as to which process a heuristic should be attached to. This suggests that the model provides a natural way to organize the voluminous and complex body of research in knowledge creation and sharing. Why is this important? Well, because it suggests that the conceptual model (as illustrated in Fig. 1) can form the basis for a simple means for project managers to assess knowledge creation / sharing capabilities in their work environments, with the assurance that they have all relevant variables covered.

Validating the mapping

The validity of the matching was checked using twenty historical case studies of ISD projects. This worked as follows: explanations for what worked well and what didn’t were mapped against the model process areas (using the heuristics identified in the prior step). The aim was to answer the question: “is there a relationship between project failure and problems in the respective knowledge processes or, conversely, between project success and the presence of positive indicators?”

One of the case studies the authors use is the well-known (and possibly over-analysed) failure of the automated dispatch system for the London Ambulance Service. The paper has a succinct summary of the case study, which I reproduce below:

The London Ambulance Service (LAS) is the largest ambulance service in the world and provides accident and emergency and patient transport services to a resident population of nearly seven million people. Their ISD project was intended to produce an automated system for the dispatch of ambulances to emergencies. The existing manual system was poor, cumbersome, inefficient and relatively unreliable. The goal of the new system was to provide an efficient command and control process to overcome these deficiencies. Furthermore, the system was seen by management as an opportunity to resolve perceived issues in poor industrial relations, outmoded work practices and low resource utilization. A tender was let for development of system components including computer aided dispatch, automatic vehicle location, radio interfacing and mobile data terminals to update the status of any call-out. The tender was let to a company inexperienced in large systems delivery. Whilst the project had profound implications for work practices, personnel were hardly involved in the design of the system. Upon implementation, there were many errors in the software and infrastructure, which led to critical operational shortcomings such as the failure of calls to reach ambulances. The system lasted only a week before it was necessary to revert to the manual system.

Jackson and Klobas show how their conceptual model maps to knowledge-related factors that may have played a role in the failure of the project. For example, under the heading of personal knowledge, one can identify at least two potential factors: lack of involvement of end-users in design and selection of an inexperienced vendor. Further, the disconnect between management and employees suggests a couple of factors relating to reification: mutual negative perceptions and outmoded (but unchallenged) work practices.

From their validation, the authors suggest that the model provides a comprehensive framework that explains why these projects failed. That may be overstating the case – what’s cause and what’s effect is hard to tell, especially after the fact. Nonetheless, the model does seem to be able to capture many, if not all, knowledge-related gaps that could have played a role in these failures. Further, by looking at the heuristics mapped to each process, one might be able to suggest ways in which these deficiencies could have been addressed. For example, if externalization is a problem area one might suggest the use of prototypes or encourage face to face communication between IS and business personnel.

Survey-based tool

Encouraged by the above, the authors created a survey tool which was intended to evaluate knowledge creation/sharing effectiveness in project environments. In the tool, academic terms used in the model were translated into everyday language (for example, the term externalization was translated to knowledge sharing – see Fig 1 for translated terms). The tool asked project managers to evaluate their project environments against each knowledge creation process (or capability) on a scale of 1 to 10. Based on inputs, it could recommend specific improvement strategies for capabilities that were scored low. The tool was evaluated by four project managers, who used it in their work environment over a period of 4-6 weeks. At the end of the period, they were interviewed and their responses were analysed using content analysis to match their experiences and requirements against the designed intent of the tool. Unfortunately, the paper does not provide any details about the tool, so it’s difficult to do much more than paraphrase the authors’ comments.
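Since the paper gives no details, the following is only my guess at the general shape such a tool might take, not a reconstruction of the authors’ tool. The cutoff for a "low" score, the function name and the example survey are all assumptions. The only strategies included are the externalization heuristics quoted earlier; capabilities without listed strategies simply get an empty list.

```python
# Assumption: a score of 4 or less on the 1 to 10 scale counts as "low".
LOW_SCORE_THRESHOLD = 4

# Strategies keyed by capability. Only the externalization heuristics
# quoted earlier in this article are filled in; the rest are left out
# because the paper's full mapping is not reproduced here.
STRATEGIES = {
    "externalisation": [
        "Use standard documentation and modelling tools",
        "Arrange regular face-to-face contact between stakeholders and IS staff",
        "Use prototypes",
    ],
}


def recommend(scores, threshold=LOW_SCORE_THRESHOLD):
    """Return {capability: strategies} for every capability scored at or below the threshold."""
    for capability, score in scores.items():
        if not 1 <= score <= 10:
            raise ValueError(f"{capability}: score {score} is outside the 1 to 10 scale")
    return {
        capability: STRATEGIES.get(capability, [])
        for capability, score in scores.items()
        if score <= threshold
    }


# Hypothetical self-assessment by a project manager.
survey = {
    "personal knowledge": 3,
    "externalisation": 4,
    "objectivation": 7,
    "legitimation": 8,
}

for capability, strategies in recommend(survey).items():
    print(capability, strategies)
```

Note that a tool like this is itself a scoring method on an ordinal scale, so the caveats from the risk scoring discussion apply: the scores are one manager’s subjective assessment, and the recommendations are only as good as the heuristics behind them.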

Based on their evaluation, the authors conclude that the tool provides:

- A common framework for project managers to discuss issues pertaining to knowledge creation and sharing.

- A means to identify potential problems and what might be done to address them.

Field testing

One of the evaluators of the model tested the tool in the field. The tester was a project manager who wanted to identify knowledge creation/sharing deficiencies in his work environment, and ways in which these could be addressed. He answered questions based on his own evaluation of knowledge sharing capabilities in his environment and then developed an improvement plan based on strategies suggested by the tool along with some of his own ideas. The completed survey and plan were returned to the researchers.

Use of the tool revealed the following knowledge creation/sharing deficiencies in the project manager’s environment:

- Inadequate personal knowledge.

- Ineffective externalization

- Inadequate standardization (objectivation)

Strategies suggested by the tool include:

- An internet portal to promote knowledge capture and sharing. This included discussion forums, areas to capture and discuss best practices etc.

- Role playing workshops to reveal how processes worked in practice (i.e. surface tacit knowledge).

Based on the above, the authors suggest that:

- Technology can be used to support knowledge sharing and standardization, not just storage.

- Interventions that make tacit knowledge explicit can be helpful.

- As a side benefit, they note that the survey has raised consciousness about knowledge creation/sharing within the team.

Reflections and Conclusions

In my opinion, the value of the paper lies not in the model or the survey tool, but the conceptual framework that underpins them – namely, the idea that knowledge depends on, and is shaped by, the social environment in which it evolves. Perhaps an example might help clarify what this means. Consider an organisation that decides to implement project management “best practices” as described by <fill in any of the popular methodologies here>. The wrong way to do this would be to implement practices wholesale, without regard to organizational culture, norms and pre-existing practices. Such an approach is unlikely to lead to the imposed practices taking root in the organisation. On the other hand, an approach that picks the practices that are useful and tailors these to organizational needs, constraints and culture is likely to meet with more success. The second approach works because it attempts to bridge the gap between the “ideal best practice” and social reality in the organisation. It encourages employees to adapt practices in ways that make sense in the context of the organization. This invariably involves modifying practices, sometimes substantially, creating new (socially constructed!) knowledge in the bargain.

Another interesting point the authors make is that several knowledge sharing heuristics (130, I think the number was) could be classified unambiguously under one of the processes in the model. This suggests that the model is a reasonable view of the knowledge creation/sharing process. If one accepts this conclusion, then the model does indeed provide a common framework for discussing issues relating to knowledge creation in project environments. Further, the associated heuristics can help identify processes that don’t work well.

I’m unable to judge the usefulness of the survey-based tool developed by the authors because they do not provide much detail about it in the paper. However, that isn’t really an issue; the field of project management has too many “tools and techniques” anyway. The key message of the paper, in my opinion, is that every project has a unique context, and that the techniques used by others have to be interpreted and applied in ways that are meaningful in the context of the particular project. The paper is an excellent counterpoint to the methodology-oriented practice of knowledge management in projects; it should be required reading for methodologists and project managers who believe that things need to be done by The Book, regardless of social or organizational context.