Archive for the ‘Risk analysis’ Category

The Flaw of Averages – a book review

Introduction

I’ll begin with an example. Assume you’re having a dishwasher installed in your kitchen. This (simple?) task requires the services of a plumber and an electrician, and both of them need to be present to complete the job. You’ve asked them to come in at 7:30 am. Going by previous experience, each of them is punctual 50% of the time. What’s the probability that work will begin at 7:30 am?

At first sight, it seems there’s a 50% chance of starting on time. However, this is incorrect: assuming the two arrive independently of each other, the chance of starting on time is actually 25%, the product of the individual probabilities for each of the tradesmen. This simple example illustrates the central theme of a book by Sam Savage entitled The Flaw of Averages: Why We Underestimate Risk in the Face of Uncertainty. This post is a detailed review of the book.
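A quick simulation makes the arithmetic concrete. This is a minimal sketch, not something from the book: it assumes the plumber and the electrician turn up independently of each other, each on time with probability 0.5, and simply counts how often both are punctual. The function name `prob_on_time_start` is hypothetical.

```python
import random

def prob_on_time_start(p_plumber: float = 0.5, p_electrician: float = 0.5,
                       trials: int = 100_000, seed: int = 1) -> float:
    """Estimate the chance that both tradesmen are punctual.

    Assumes each tradesman's punctuality is independent of the other's.
    """
    rng = random.Random(seed)
    both_on_time = 0
    for _ in range(trials):
        plumber_on_time = rng.random() < p_plumber
        electrician_on_time = rng.random() < p_electrician
        # Work can only start at 7:30 if *both* turn up on time.
        if plumber_on_time and electrician_on_time:
            both_on_time += 1
    return both_on_time / trials

estimate = prob_on_time_start()
exact = 0.5 * 0.5  # product of the individual probabilities
print(f"simulated: {estimate:.3f}, exact: {exact:.3f}")
```

If the two tradesmen's punctuality were related (say, they travel together), the product rule would no longer apply and the simulation would need to model that dependence explicitly.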

The key message that Savage conveys is that uncertain quantities cannot be represented by single numbers; rather, they are a range of numbers, each with a different probability of occurrence. Hence such quantities cannot be manipulated using standard arithmetic operations. The example in the previous paragraphs illustrates this point. This is well known to those who work with uncertain numbers (actuaries, for instance), but is not so well understood by business managers and decision makers. Consider the executive who asks his long-suffering subordinate for a projected sales figure for next month, and then treats the quoted number as 100% certain. Sadly, such stories are more the norm than the exception, so there is clearly a need for a better understanding of how uncertain quantities should be interpreted. The main aim of the book is to help those with little or no statistical training achieve that understanding.

Developing an intuition for uncertainty

Early in the book, Savage presents five tools that can be used to develop a feel for uncertainty. He refers to these tools as mindles – or mind handles. His five mindles for uncertainty are:

- Risk is in the eye of the beholder, uncertainty isn’t. Basically this implies that uncertainty does not equate to risk. An uncertain event is a risk only if there is a potential loss or gain involved. See my review of Douglas Hubbard’s book on the failure of risk management for more on risk vs. uncertainty.

- An uncertain quantity is a shape (or a distribution of numbers) rather than a single number. The broadness of the shape is a measure of the degree of uncertainty. See my post on the inherent uncertainty of project task estimates for an intuitive discussion of how a task estimate is a shape rather than a number.

- A combination of several uncertain numbers is also a shape, but the combined shape can be very different from those of the individual uncertainties. Specifically, if the uncertain quantities are independent, the combined shape can be narrower relative to its average (i.e. less uncertain) than the individual shapes. This provides the justification for portfolio diversification, which tells us not to put all our money on one horse, or all our eggs in one basket. See my introductory post on Monte Carlo simulations for an example of how multiple uncertain quantities can combine in different ways.

- If the individual uncertain quantities (discussed in the previous point) aren’t independent, the overall uncertainty can increase or decrease depending on whether the quantities are positively or negatively related. The nature of the relationship (positive or negative) can be determined from a scatter plot of the quantities. See my post on simulation of correlated project tasks for examples of scatter plots. The post also discusses how positive relationships (or correlations) can increase uncertainty.

- Plans based on average numbers are incorrect on average. Using average numbers in plans usually entails manipulating them algebraically and/or plugging them into functions. Savage explains how the form of the function can lead to an overestimation or underestimation of the planned value. Although this sounds somewhat abstruse, the basic idea is simple: when the function is nonlinear, plugging in an average does not, in general, give the average result, and further mathematical operations on that number can amplify the error caused by the flaw of averages.
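To see mindles 3 and 4 in action, the sketch below adds two uncertain quantities and measures how broad the combined shape is for negative, zero and positive correlation. It is an illustration under assumed inputs (two standard normal variables with a chosen correlation `rho`; the function name `spread_of_sum` is hypothetical), not Savage's own example.

```python
import math
import random
import statistics

def spread_of_sum(rho: float, trials: int = 50_000, seed: int = 7) -> float:
    """Standard deviation of X + Y, where X and Y are standard normal with correlation rho."""
    rng = random.Random(seed)
    totals = []
    for _ in range(trials):
        z1 = rng.gauss(0.0, 1.0)
        z2 = rng.gauss(0.0, 1.0)
        x = z1
        # Mix in z1 so that corr(x, y) = rho while y keeps unit variance.
        y = rho * z1 + math.sqrt(1.0 - rho ** 2) * z2
        totals.append(x + y)
    return statistics.pstdev(totals)

for rho in (-0.8, 0.0, 0.8):
    theory = math.sqrt(2.0 + 2.0 * rho)
    print(f"rho={rho:+.1f}: simulated sd={spread_of_sum(rho):.3f}, theory={theory:.3f}")
```

For standard normal inputs the spread of the sum is $\sqrt{2 + 2\rho}$, so a positive relationship widens the combined shape and a negative one narrows it.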

Savage explains the above concepts using simple arithmetic supplemented with examples drawn from a range of real-life business problems.

The two forms of the flaw of averages

The book makes a distinction between two forms of the flaw of averages. In its first avatar, the flaw states that the combined average of two uncertain quantities equals the sum of their individual averages, but the shape of the combined uncertainty can be very different from the sum of the individual shapes (Recall that an uncertain number is a shape, but its average is a number). Savage calls this the weak form of the flaw of averages. The weak form applies when one deals with uncertain quantities directly. An example of this is when one adds up probabilistic estimates for two independent project tasks with no lead or lag between them. In this case the average completion time is the sum of the average completion times for the individual tasks, but the shape of the distribution of the combined tasks does not resemble the shape of the individual distributions. The fact that the shape is different is a consequence of the fact that probabilities cannot be “added up” like simple numbers. See the first example in my post on Monte Carlo simulation of project tasks for an illustration of this point.
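The weak form can be checked with a few lines of simulation. The sketch assumes two independent tasks whose durations are each uniformly distributed between 2 and 6 days (hypothetical numbers). The averages add exactly, but the combined distribution is peaked (triangular) rather than flat, so a much larger share of trials falls in the middle of the range.

```python
import random
import statistics

rng = random.Random(42)
TRIALS = 100_000

# Hypothetical: each task takes anywhere from 2 to 6 days, all values equally likely.
task_a = [rng.uniform(2, 6) for _ in range(TRIALS)]
task_b = [rng.uniform(2, 6) for _ in range(TRIALS)]
combined = [a + b for a, b in zip(task_a, task_b)]

# Averages add up ...
mean_a = statistics.fmean(task_a)
mean_b = statistics.fmean(task_b)
mean_combined = statistics.fmean(combined)
print(f"mean A + mean B = {mean_a + mean_b:.2f}, mean of combined = {mean_combined:.2f}")

# ... but shapes do not: compare the share of trials in the middle half of each range.
share_single = sum(3 <= a <= 5 for a in task_a) / TRIALS       # flat shape: about 0.50
share_combined = sum(6 <= c <= 10 for c in combined) / TRIALS  # peaked shape: about 0.75
print(f"middle-half share: single task {share_single:.2f}, combined {share_combined:.2f}")
```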

In contrast, when one deals with nonlinear functions of uncertain quantities, the combined average of the functions does not, in general, equal the sum of the individual averages. This happens because such functions “weight” random variables in a non-uniform manner, thereby amplifying certain values of the variable. An example of this is where we have two sequential tasks with an earliest possible start time for the second. The earliest possible start time for the second task introduces a nonlinearity in cases where the first task finishes early (essentially because there is a lag between the finish of the first task and the start of the second in this situation). The constraint causes the average of the combined tasks to be greater than the sum of the individual averages. Savage calls this the strong form of the flaw of averages. It applies whenever one deals with nonlinear functions of uncertain variables. See the second example in my post on Monte Carlo simulation of multiple project tasks for an illustration of this point.
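Here is a minimal sketch of the strong form, again with hypothetical numbers: task A and task B each take between 2 and 6 days (uniformly distributed), and task B cannot start before day 4 even if A finishes early. The `max` in the finish-time calculation is the nonlinearity. Under these assumptions the sum of the averages is 8 days, while the average finish time works out to 8.5 days.

```python
import random
import statistics

rng = random.Random(2024)
TRIALS = 100_000
EARLIEST_START_B = 4.0  # hypothetical: task B cannot start before day 4

# Hypothetical durations (days): task A ~ uniform(2, 6), task B ~ uniform(2, 6).
a = [rng.uniform(2, 6) for _ in range(TRIALS)]
b = [rng.uniform(2, 6) for _ in range(TRIALS)]

# B starts when A finishes, but never before its earliest possible start.
finish = [max(ai, EARLIEST_START_B) + bi for ai, bi in zip(a, b)]

naive_plan = statistics.fmean(a) + statistics.fmean(b)  # plan based on averages: about 8
simulated = statistics.fmean(finish)                     # average finish: about 8.5
print(f"sum of averages: {naive_plan:.2f}, average finish: {simulated:.2f}")
```

Whenever A finishes early, the constraint throws that gain away, but when A runs late the delay is passed on in full; that asymmetry is what pushes the average finish above the sum of the averages.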

Much of the book presents real-life illustrations of the two forms of the flaw in risk assessment, drawn from fields ranging from finance to the film industry and from petroleum to pharmaceutical supply chains. Savage also covers the average-based abuse of statistics in discussions of topical “hot-button” issues such as climate change and health care.

De-jargonising statistics

A layperson-friendly feature of the book is that it explains statistical terms in plain English. As an example, Savage spends an entire chapter demystifying the term correlation using scatter plots. Another term that he explains is the Central Limit Theorem (CLT), which states that the sum of a large number of independent random variables tends to resemble the Normal (or bell-shaped) distribution. A consequence of the CLT is that one can reduce investment risk by diversifying one’s investments – i.e. making several (small) independent investments rather than a single (large) one – this is essentially mindle #3 discussed earlier.
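The diversification argument can be illustrated with a toy portfolio. This sketch assumes a hypothetical investment that loses 20% or gains 40% with equal probability, and splits a fixed sum equally across `n` independent copies of it (`portfolio_returns` is a hypothetical helper). The average return stays at about 10%, but the spread shrinks roughly as $1/\sqrt{n}$ and the chance of an overall loss falls.

```python
import random
import statistics

def portfolio_returns(n_investments: int, trials: int = 20_000, seed: int = 3):
    """Simulated returns of a portfolio split equally across n independent, identical investments.

    Hypothetical investment: loses 20% or gains 40%, each with probability 0.5.
    """
    rng = random.Random(seed)
    results = []
    for _ in range(trials):
        outcomes = [rng.choice((-0.20, 0.40)) for _ in range(n_investments)]
        results.append(sum(outcomes) / n_investments)  # equal split -> average return
    return results

for n in (1, 4, 25):
    r = portfolio_returns(n)
    loss_chance = sum(x < 0 for x in r) / len(r)
    print(f"n={n:2d}: mean={statistics.fmean(r):.3f}, "
          f"sd={statistics.pstdev(r):.3f}, P(loss)={loss_chance:.2f}")
```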

Decisions, decisions

Towards the middle of the book, Savage makes a foray into decision theory, focusing on the concept of value of information. Since decisions are (or should be) made on the basis of information, one needs to gather pertinent information prior to making a decision. Now, information gathering costs money (and time, which translates to money). This raises the question of how much one should spend in collecting information relevant to a particular decision. It turns out that in many cases one can use decision theory to put a dollar value on a particular piece of information. Surprisingly, organisations often overspend on gathering irrelevant information. Savage spends a few chapters discussing how one can compute the value of information based on simple techniques of decision theory. As interesting as this section is, however, I think it is somewhat disconnected from the rest of the book.
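As an illustration of putting a dollar value on information, the sketch below computes the expected value of perfect information (EVPI), one standard decision-theory quantity. The decision, probabilities and payoffs are all made up, and this is not necessarily the procedure Savage uses: the value of knowing the market state in advance is the difference between deciding after learning it and deciding now on expected values.

```python
# Hypothetical decision: launch a product or not, in a market that is "good" or "bad".
P_GOOD = 0.4
PAYOFF = {  # PAYOFF[action][state], hypothetical figures in $k
    "launch": {"good": 100.0, "bad": -50.0},
    "hold": {"good": 0.0, "bad": 0.0},
}
PROBS = {"good": P_GOOD, "bad": 1.0 - P_GOOD}

def expected_value(action: str) -> float:
    """Probability-weighted payoff of committing to an action before the state is known."""
    return sum(PROBS[s] * PAYOFF[action][s] for s in PROBS)

# Without further information: pick the action with the best expected payoff.
ev_without_info = max(expected_value(a) for a in PAYOFF)

# With perfect information: learn the state first, then pick the best action for it.
ev_with_perfect_info = sum(PROBS[s] * max(PAYOFF[a][s] for a in PAYOFF) for s in PROBS)

evpi = ev_with_perfect_info - ev_without_info
print(f"EV without info: {ev_without_info:.1f}, "
      f"with perfect info: {ev_with_perfect_info:.1f}, EVPI: {evpi:.1f}")
```

Here the EVPI is 30 (in the same hypothetical $k units), so no market study, however good, would be worth paying more than that for this decision.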

Curing the flaw: SIPs, SLURPS and Probability Management

The last part of the book is dedicated to outlining a solution (or as Savage calls it, a cure) to average-based – or flawed – statistical thinking. The central idea is to use pre-generated libraries of simulation trials for variables of interest. Savage calls such a packaged set of simulation trials a Stochastic Information Packet (SIP). Here’s an example of how it might work in practice:

Most business organisations worry about next year’s sales. Different divisions in the organisation might forecast sales using different techniques. Further, they may use these forecasts as the basis for other calculations (such as profit and expenses, for example). The forecasted numbers cannot be compared with each other because each calculation is based on different simulations or, worse, different probability distributions. The upshot is that forecasted sales results can’t be combined or even compared. The problem can be avoided if everyone in the organisation uses the same SIP for forecasted sales: the results of calculations can then be compared, and even combined, because they are based on the same simulation.

Calculations that are based on the same SIP (or set of SIPs) form a set of simulations that can be combined and manipulated using arithmetic operations. Savage calls such sets of simulations Scenario Library Units with Relationships Preserved (or SLURPS). The name reflects the fact that each of the calculations is based on the same set of sales scenarios (or results of simulation trials). Regarding the terminology: I’m not a fan of laboured acronyms, but concede that they can serve as good mnemonics.
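A small sketch shows why a shared SIP matters. Everything here is hypothetical: a central list of sales trials (`SALES_SIP`), a price, and a cost model in which costs rise with sales. When both divisions compute their results trial by trial from the same SIP, profit can be obtained by subtracting element-wise, and the relationship between revenue and costs is preserved. When one division uses its own independent simulation instead, the averages still agree but the spread of profit is badly overstated.

```python
import random
import statistics

# Hypothetical SIP: 10,000 pre-generated trials of next year's unit sales,
# produced once by a central probability manager and shared by everyone.
rng = random.Random(11)
SALES_SIP = [rng.gauss(1000.0, 200.0) for _ in range(10_000)]

PRICE, UNIT_COST, FIXED_COST = 50.0, 30.0, 15_000.0  # hypothetical figures

# Two divisions each run their own calculation, but trial by trial on the same SIP.
revenue = [PRICE * s for s in SALES_SIP]                  # sales division
costs = [FIXED_COST + UNIT_COST * s for s in SALES_SIP]  # operations division

# Trial i of both results refers to the same scenario, so they can be combined
# element-wise (a SLURP-style combination that preserves relationships).
profit = [r - c for r, c in zip(revenue, costs)]

# Contrast: operations uses its own, independent simulation of sales.
rng_other = random.Random(99)
costs_independent = [FIXED_COST + UNIT_COST * rng_other.gauss(1000.0, 200.0)
                     for _ in range(10_000)]
profit_broken = [r - c for r, c in zip(revenue, costs_independent)]

print(f"shared SIP:       mean profit {statistics.fmean(profit):8.0f}, "
      f"sd {statistics.pstdev(profit):6.0f}")
print(f"independent sims: mean profit {statistics.fmean(profit_broken):8.0f}, "
      f"sd {statistics.pstdev(profit_broken):6.0f}")
```

With these made-up numbers the profit spread is about 4,000 with the shared SIP and close to three times that with independent simulations, even though the average profit is about the same in both cases.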

The proposed approach ensures that the results of the combined calculations will avoid the flaw of averages, and exhibit the correct statistical behaviour. However, it assumes that there is an organisation-wide authority responsible for generating and maintaining appropriate SIPs. This authority – the probability manager – will be responsible for a “database” of SIPs that covers all uncertain quantities of interest to the business, and for making these available to everyone in the organisation who needs to use them. To quote from the book, probability management involves:

…a data management system in which the entities being managed are not numbers, but uncertainties, that is, probability distributions. The central database is a Scenario Library containing thousands of potential future values of uncertain business parameters. The library exchanges information with desktop distribution processors that do for probability distributions what word processors did for words and what spreadsheets did for numbers.

Savage sees probability management as a key step towards managing uncertainty and risk in a coherent manner across organisations. He mentions some organizations that have already started down this route (Shell and Merck, for instance). The book can thus also be seen as a manifesto for the new discipline of probability management.

Conclusion

I have come across the flaw of averages in various walks of organizational life, ranging from project scheduling to operational risk analysis. Most often, the folks responsible for analysing uncertainty are aware of the flaw, and have the requisite knowledge of statistics to deal with it. However, such analyses can be hard to explain to those who lack this knowledge. This is why some managers still demand a single number. Yes, such attitudes betray a lack of understanding of what uncertain numbers are and how they can be combined, but that’s the way it is in most organizations. The book is directed largely to that audience.

To sum up: the book is an entertaining and informative read on some common misunderstandings of statistics. Along the way the author translates many statistical principles and terms from “jargonese” to plain English. The book deserves to be read widely, especially by those who need it the most: managers and other decision-makers who need to understand the arithmetic of uncertainty.

Bayes Theorem for project managers

Introduction

Projects are fraught with uncertainty, so it is no surprise that the language and tools of probability are making their way into project management practice. A good example of this is the use of Monte Carlo methods to estimate project variables. Such tools enable the project manager to present estimates in terms of probabilities (e.g. there’s a 90% chance that a project will finish on time) rather than illusory certainties. Now, it often happens that we want to find the probability of an event occurring given that another event has occurred. For example, one might want to find the probability that a project will finish on time given that a major scope change has already occurred. Such conditional probabilities, as they are referred to in statistics, can be evaluated using Bayes Theorem. This post is a discussion of Bayes Theorem using an example from project management.

Bayes theorem by example

All project managers want to know whether the projects they’re working on will finish on time. So, as our example, we’ll assume that a project manager asks the question: what’s the probability that my project will finish on time? There are only two possibilities here: either the project finishes on (or before) time or it doesn’t. Let’s express this formally. Denoting the event the project finishes on (or before) time by $T$, the event the project does not finish on (or before) time by $\tilde{T}$, and the probabilities of the two by $P(T)$ and $P(\tilde{T})$ respectively, we have:

$$P(T) + P(\tilde{T}) = 1 \qquad (1)$$

Equation (1) is simply a statement of the fact that the sum of the probabilities of all possible outcomes must equal 1.

Fig 1 is a pictorial representation of the two events and how they relate to the entire universe of projects done by the organisation our project manager works in. The rectangular areas $A_T$ and $A_{\tilde{T}}$ represent the on time and not on time projects, and the sum of the two areas, $A_T + A_{\tilde{T}}$, represents all projects that have been carried out by the organisation.

In terms of areas, the probabilities quoted above can be expressed as:

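$$P(T) = \frac{A_T}{A_T + A_{\tilde{T}}} \qquad (2)$$

and

$$P(\tilde{T}) = \frac{A_{\tilde{T}}}{A_T + A_{\tilde{T}}} \qquad (3)$$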

This also makes explicit the fact that the sum of the two probabilities must add up to one.

Now, there are several variables that can affect project completion time. Let’s look at just one of them: scope change. Let’s denote the event “there is a major change of scope” by $C$ and the complementary event (that there is no major change of scope) by $\tilde{C}$.

Again, since the two possibilities cover the entire spectrum of outcomes, we have:

$$P(C) + P(\tilde{C}) = 1 \qquad (4)$$

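Fig 2 is a pictorial representation of $C$ and $\tilde{C}$. The rectangular areas $A_C$ and $A_{\tilde{C}}$ represent the projects that have undergone major scope changes and those that haven’t, respectively. In terms of areas, we have:

$$P(C) = \frac{A_C}{A_C + A_{\tilde{C}}} \qquad (5)$$

and

$$P(\tilde{C}) = \frac{A_{\tilde{C}}}{A_C + A_{\tilde{C}}} \qquad (6)$$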

Clearly we also have $A_C + A_{\tilde{C}} = A_T + A_{\tilde{T}}$, since the total number of projects is fixed regardless of how they are categorised.

Now things get interesting. One could ask the question: What is the probability of finishing on time given that there has been a major scope change? This is a conditional probability because it represents the likelihood that something will happen (on-time completion) on the condition that something else has already happened (scope change).

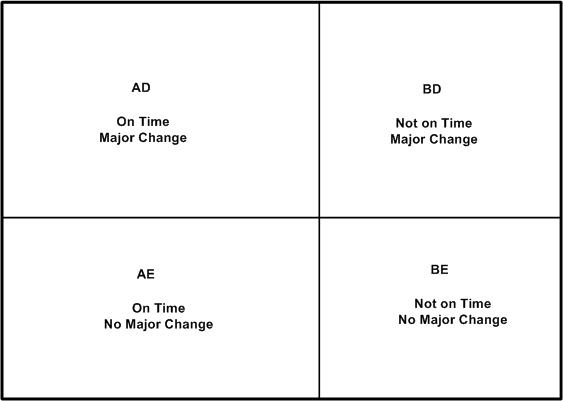

As a first step to answering the question posed in the previous paragraph, let’s combine the two events graphically. Fig 3 is a combination of Figs 1 and 2. It shows four possible events:

- On Time with Major Change ($T$, $C$) – denoted by the rectangular area $A_{T \cap C}$ in Fig 3.

- On Time with No Major Change ($T$, $\tilde{C}$) – denoted by the rectangular area $A_{T \cap \tilde{C}}$ in Fig 3.

- Not On Time with Major Change ($\tilde{T}$, $C$) – denoted by the rectangular area $A_{\tilde{T} \cap C}$ in Fig 3.

- Not On Time with No Major Change ($\tilde{T}$, $\tilde{C}$) – denoted by the rectangular area $A_{\tilde{T} \cap \tilde{C}}$ in Fig 3.

We’re interested in the probability that the project finishes on time given that it has suffered a major change in scope. In the notation of conditional probability, this is denoted by $P(T|C)$. In terms of areas, this can be expressed as

$$P(T|C) = \frac{A_{T \cap C}}{A_C} \qquad (7)$$

since $A_C$ (or equivalently $A_{T \cap C} + A_{\tilde{T} \cap C}$) represents all projects that have undergone a major scope change.

Similarly, the conditional probability that a project has undergone a major change given that it has come in on time, $P(C|T)$, can be written as:

$$P(C|T) = \frac{A_{T \cap C}}{A_T} \qquad (8)$$

since $A_T = A_{T \cap C} + A_{T \cap \tilde{C}}$.

Now, what I’m about to do next may seem like pointless algebraic jugglery, but bear with me…

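Consider the ratio of the area $A_{T \cap C}$ to the big outer rectangle (whose area is $A_T + A_{\tilde{T}}$, or equivalently $A_C + A_{\tilde{C}}$). This ratio can be expressed as follows:

$$\frac{A_{T \cap C}}{A_T + A_{\tilde{T}}} = \frac{A_{T \cap C}}{A_C} \times \frac{A_C}{A_C + A_{\tilde{C}}} = \frac{A_{T \cap C}}{A_T} \times \frac{A_T}{A_T + A_{\tilde{T}}} \qquad (9)$$

This is simply multiplying and dividing by the same factor ($A_C$ in the second expression and $A_T$ in the third).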

Written in the notation of conditional probabilities, the second and third expressions in (9) are:

$$P(T|C)\,P(C) = P(C|T)\,P(T) \qquad (10)$$

which is Bayes theorem.

From the above discussion, it should be clear that Bayes theorem follows from the definition of conditional probability.

We can rewrite Bayes theorem in several equivalent ways:

$$P(T|C) = \frac{P(C|T)\,P(T)}{P(C)} \qquad (11)$$

or

$$P(T|C) = \frac{P(C|T)\,P(T)}{P(C|T)\,P(T) + P(C|\tilde{T})\,P(\tilde{T})} \qquad (12)$$

where the denominator in (12) follows from the fact that a project that undergoes a major change will either be on time or will not be on time (there is no other possibility).
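The derivation can be checked with counts. The sketch below assumes hypothetical numbers of projects in each of the four regions of Fig 3, computes the probabilities as area ratios, and confirms equations (10) and (12) exactly, using Python's `fractions.Fraction` to avoid rounding error.

```python
from fractions import Fraction

# Hypothetical project counts for the four regions of Fig 3.
counts = {
    ("T", "C"): 18,    # on time, major change
    ("T", "~C"): 42,   # on time, no major change
    ("~T", "C"): 32,   # not on time, major change
    ("~T", "~C"): 8,   # not on time, no major change
}
total = sum(counts.values())

A_T = counts[("T", "C")] + counts[("T", "~C")]
A_notT = counts[("~T", "C")] + counts[("~T", "~C")]
A_C = counts[("T", "C")] + counts[("~T", "C")]

P_T = Fraction(A_T, total)                          # eq (2)
P_C = Fraction(A_C, total)                          # eq (5)
P_T_given_C = Fraction(counts[("T", "C")], A_C)     # eq (7)
P_C_given_T = Fraction(counts[("T", "C")], A_T)     # eq (8)
P_C_given_notT = Fraction(counts[("~T", "C")], A_notT)

# Eq (10): both products equal the area ratio A_{T∩C} / (total area).
assert P_T_given_C * P_C == P_C_given_T * P_T == Fraction(counts[("T", "C")], total)

# Eq (12): the denominator expands P(C) over the on-time / not-on-time split.
P_C_expanded = P_C_given_T * P_T + P_C_given_notT * (1 - P_T)
assert P_C_expanded == P_C

print("P(T|C) =", P_T_given_C)
```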

A numerical example

To complete the discussion, let’s look at a numerical example.

Assume our project manager has historical data on projects that have been carried out within the organisation. On analyzing the data, the PM finds that 60% of all projects finished on time. This implies:

$$P(T) = 0.6 \qquad (13)$$

and

$$P(\tilde{T}) = 0.4 \qquad (14)$$

Let us assume that our organisation also tracks major changes made to projects in progress. Say 50% of all historical projects are found to have major changes. This implies:

$$P(C) = 0.5 \qquad (15)$$

Finally, let us assume that our project manager has access to detailed data on successful projects, and that an analysis of this data shows that 30% of on-time projects have undergone at least one major scope change. This gives:

$$P(C|T) = 0.3 \qquad (16)$$

Equations (13) through (16) give us the numbers we need to calculate $P(T|C)$ using Bayes Theorem. Plugging the numbers into equation (11), we get:

$$P(T|C) = \frac{0.3 \times 0.6}{0.5} = 0.36 \qquad (17)$$

So, in this organisation, if a project undergoes a major change then there’s a 36% probability that it will finish on time. Compare this to the 60% (unconditional) probability of finishing on time. Bayes theorem enables the project manager to quantify the impact of change in scope on project completion time, provided the relevant historical data is available. That last qualification is important; I’ll have more to say about it in the concluding section.
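The same calculation as a small helper. The function name `bayes` is hypothetical; it implements equation (11) directly and guards against division by zero.

```python
def bayes(p_b_given_a: float, p_a: float, p_b: float) -> float:
    """Return P(A|B) = P(B|A) * P(A) / P(B), i.e. equation (11)."""
    if p_b == 0:
        raise ValueError("P(B) must be non-zero")
    return p_b_given_a * p_a / p_b

p_on_time = 0.6               # P(T), from the historical data
p_major_change = 0.5          # P(C)
p_change_given_on_time = 0.3  # P(C|T)

p_on_time_given_change = bayes(p_change_given_on_time, p_on_time, p_major_change)
print(f"P(T|C) = {p_on_time_given_change:.2f}")  # prints "P(T|C) = 0.36"
```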

In closing this section I should emphasise that although my discussion of Bayes theorem is couched in terms of project completion times and scope changes, the arguments used are general. Bayes theorem holds for any pair of events.

Concluding remarks

It should be clear that the probability calculated in the previous section is an extrapolation based on past experience. In this sense, Bayes Theorem is a formal statement of the belief that one can predict the future based on past events. This goes beyond probability theory; it is an assumption that underlies much of science. It is important to emphasise that the prediction is based on enumeration, not analysis: it is solely based on ratios of the number of projects in one category versus the other; there is no attempt at finding a causal connection between the events of interest. In other words, Bayes theorem suggests there is a correlation between major changes in scope and delays, but it does not tell us why. The latter question can be answered only via a detailed study which might culminate in a theory that explains the causal connection between changes in scope and completion times.

It is also important to emphasise that the data used in calculations should be based on events that are akin to the one at hand. In the case of the example, I have assumed that historical data is for projects that resemble the one the project manager is working on. This assumption must be validated because there could be situations in which a major change in scope actually reduces completion time (when the project is “scoped-down”, for instance). In such cases, one would need to ensure that the numbers that go into Bayes theorem are based on historical data for “scoped-down” projects only.

To sum up: Bayes theorem expresses a fundamental relationship between conditional probabilities of two events. Its main utility is that it enables us to make probabilistic predictions based on past events; something that a project manager needs to do quite often. In this post I’ve attempted to provide a straightforward explanation of Bayes theorem – how it comes about and what it’s good for. I hope I’ve succeeded in doing so. But if you’ve found my explanation confusing, I can do no better than to direct you to a couple of excellent references.

Recommended Reading

- An Intuitive (and short) explanation of Bayes Theorem – this is an excellent and concise explanation by Kalid Azad of Better Explained.

- An intuitive explanation of Bayes Theorem – this article by Eliezer Yudkowsky is the best explanation of Bayes theorem I’ve come across. However, it is very long, even by my verbose standards!

Communicating risks using the Improbability Scale

It can be hard to develop an intuitive feel for a probability that is expressed in terms of a single number. The main reason for this is that a numerical probability, without anything to compare it to, may not convey a sense of how likely (or unlikely) an event is. For example, the NSW Road Transport Authority tells us that 0.97% of the registered vehicles on the road in NSW in 2008 were involved in at least one accident. Based on this, the probability that a randomly chosen vehicle will be involved in an accident over a period of one year is 0.0097. Although this number suggests the risk is small, it raises the question: how small? How does it compare to the probability of other, known events? In a short paper entitled The Improbability Scale, David Ritchie outlines how to make this comparison in an intuitively appealing way.

Ritchie defines the Improbability Scale, $I$, as:

$$I = -\log_{10}(p)$$

where $p$ is the probability of the event.

By definition, $I$ is 0 for absolutely certain events ($p = 1$), and increases as $p$ decreases. The advantage of using $I$ (as opposed to $p$) is that, in most cases, $I$ will be a number between 0 and 10. An $I$ of 10 corresponds to a probability of 0.0000000001, which is so small that the event it refers to is practically impossible.

Let’s look at the improbability of some events expressed in terms of $I$.

- Rolling a six on the throw of a die: $p$ = 1/6; $I$ = 0.8.

- Picking a specific card (say the 10 of diamonds) from a pack (jokers excluded): $p$ = 1/52; $I$ = 1.7.

- A (particular) vehicle being involved in at least one accident in the Australian state of NSW over a period of one year (the example quoted in the first paragraph): $p$ = 0.0097; $I$ = 2.0.

- One’s birthday occurring on a randomly picked day of the year: $p$ = 1/365; $I$ = 2.6.

- Getting 10 heads in 10 consecutive coin tosses: $p = (1/2)^{10}$ (or 0.00098); $I$ = 3.0.

- Drawing 5 sequential cards of the same suit from a complete deck (a straight flush): $p$ = 0.0000139; $I$ = 4.9. (Note: This can be calculated by dividing the total number of straight-flush hands by the total number of 5-card hands from a deck of 52. I’m too lazy to do the calculation myself, but it’s explained in this Wikipedia article if you’re interested.)

- Being struck by lightning in Australia: $p$ = 1/1600000; $I$ = 6.2. (Source: this article from Australian Geographic; the article doesn’t say over what period, but I reckon it’s per year.)

- Winning the Oz Lotto Jackpot: $p \approx 2 \times 10^{-8}$; $I$ = 7.7 (based on odds from NSW lotteries for a single game).

Apart from clarifying the risk of a traffic accident, this tells me (quite unambiguously!) that I must stop buying lottery tickets.
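The improbability values in the list can be reproduced in a few lines. The sketch assumes the scale is $I = -\log_{10}(p)$ as defined above; `improbability` is a hypothetical helper name. The Oz Lotto entry is left out because its exact odds are not quoted.

```python
import math

def improbability(p: float) -> float:
    """Ritchie's improbability scale: I = -log10(p), for 0 < p <= 1."""
    if not 0 < p <= 1:
        raise ValueError("p must be in (0, 1]")
    return -math.log10(p)

events = {
    "six on a die": 1 / 6,
    "specific card from 52": 1 / 52,
    "NSW vehicle accident (1 yr)": 0.0097,
    "birthday on a random day": 1 / 365,
    "10 heads in 10 tosses": 0.5 ** 10,
    "straight flush": 0.0000139,
    "lightning strike (Australia)": 1 / 1_600_000,
}
for name, p in events.items():
    print(f"{name:30s} p={p:.3g}  I={improbability(p):.1f}")
```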

A side benefit of the improbability scale is that it eases the task of calculating the probability of combined events. If two events are independent, the probability that they will occur together is given by the product of their individual probabilities of occurrence. Since the logarithm of a product of two numbers equals the sum of their logarithms, $I$ for the combined event is obtained by adding the individual $I$ values. So the $I$ for throwing a six and drawing a specific card from a deck is 2.5 (that is, 0.8+1.7), making it less likely than being involved in a vehicle accident. That certainly puts both probabilities in perspective.
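A short check of the additivity property, assuming the two events are independent (a die roll and a card draw): the $I$ of the joint event equals the sum of the individual $I$ values, up to floating-point rounding. The helper name `improbability` is hypothetical.

```python
import math

def improbability(p: float) -> float:
    """Ritchie's improbability scale: I = -log10(p)."""
    return -math.log10(p)

p_six = 1 / 6
p_card = 1 / 52

# For independent events, P(both) = p_six * p_card, so the I values add.
combined = improbability(p_six * p_card)
summed = improbability(p_six) + improbability(p_card)
print(f"I(six and card) = {combined:.2f}; I(six) + I(card) = {summed:.2f}")
```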

In short: the improbability scale offers a nice way to understand the likelihood of an event occurring in comparison to other events. In particular, the examples discussed above show how it can be used to illustrate and communicate the likelihood of risks in a vivid and intuitive manner.