A thin veneer of process

Some time back I published a post arguing that much of the knowledge relating to organizational practices is tacit – i.e. it is impossible to capture in writing or speech. Consequently, best practices and standards that purportedly codify “best of breed” organizational practices are necessarily incomplete: they do not (and cannot) detail how a practice should be internalised and implemented in specific situations.

For a best practice to be successful, it has to be understood and moulded in a way that makes sense in the working culture and environment of the implementing organisation. One might refer to this process as “adaptation” or “customization”, but it is much more than minor tweaking of a standard process or practice. Tacit knowledge relates to the process of learning, or getting to know. This necessarily differs from individual to individual, and can’t be picked up by reading best practice manuals. Building tacit knowledge takes time and, therefore, so does the establishment of new organizational processes. Consequently, there is a lot of individual on-the-job learning and tinkering before a newly instituted procedure becomes an organizational practice.

This highlights a gap between how practices are implemented and how they actually work. All too often, an organisation will institute a project to implement a best practice – say a quality management methodology – and declare success as soon as the project is completed. Such a declaration is premature because the new practice is yet to take root in the organisation. This common approach to best practice implementation does not allow enough time for the learning and dialogue that is so necessary for the establishment of an organizational practice. The practice remains “a thin veneer of process” that peels off all too easily.

Yet, despite the fact that it does not work, the project-oriented approach remains popular. Why is this so? I believe this happens because decision-makers view the implementation of best practices as a purely technical problem – practices are seen as procedures that can be grafted onto the organization without due regard to culture, context, environment or ethics. When culture, context and people are considered incidental, practices are reduced to their mechanical (or bureaucratic) elements – those that can be captured in documents, workflow diagrams and forms. These elements are tangible, so implementers can point to them as “proof” that the processes have been implemented.

Hence the manager who says: “We have rolled out our new project management system and all users have undergone training. The implementation of the new methodology has been completed.”

Sorry, but it has just begun. Success – if it comes at all – will take a lot more time and effort.

So how should best practice implementations be approached?

It should be clear that a successful implementation cannot come from a cookbook approach that follows textbook or consultant “recipes.” Rather, it involves the following:

- Extensive adaptation of techniques to suit the context and environment of the organisation.

- Involvement of the people who will work with and be affected by the processes. This often goes under the banner of “buy-in”, but it is more than that: these people must have a say in what adaptations are made and how they are made. But even before they do that, they must be allowed to play with the process – to tinker – so that they can improve their understanding of its intent and working.

- An understanding that the process is not cast in stone – that it must be modified as employees gain insights into how the process can be improved.

All these elements tie into the idea that practices and procedures involve tacit knowledge that sits in people’s heads. The visible, or explicit, aspects – which are often mistaken for the practice – are but a thin veneer of process.

So, in conclusion, the technical implementation of a best practice is only the beginning – it is the start of the real work of internalizing the practice through the learning required to sustain and support it.

Value judgements in system design

Introduction

How do we choose between competing design proposals for information systems? In principle this should be done using an evaluation process based on objective criteria. In practice, though, people generally make choices based on their interests and preferences. These can differ widely, so decisions are often made on the basis of criteria that do not satisfy the interests of all stakeholders. Consequently, once a system becomes operational, complaints from stakeholder groups whose interests were overlooked are almost inevitable (Just think back to any system implementation that you were involved in…).

The point is: choosing between designs is not a purely technical issue; it also involves value judgements – what’s right and what’s wrong – or even, what’s good and what’s not. Problem is, this is a deeply personal matter – different folks have different values and, consequently, differing ideas of which design ideal is best (Note: the term ideal refers to the value judgements associated with a design). Ten years ago, Heinz Klein and Rudy Hirschheim addressed this issue in a landmark paper entitled, Choosing Between Competing Design Ideals in Information Systems Development. This post is a summary of the main ideas presented in their paper.

Design ideals and deliberation

A design ideal is not an abstract, philosophical concept. The notion of good and bad or right and wrong can be applied to the technical, economic and social aspects of a system. For example, a choice between building and buying a system has different economic and social consequences for stakeholder groups within and outside the organisation. What’s more, the competing ideals may be in conflict – developers employed in the organisation would obviously prefer to build rather than buy because their employment depends on it; management, however, may have a very different take on the issue.

The essential point that Hirschheim and Klein make is that differences in values can be reconciled only through honest discussion. They propose a deliberative approach wherein all stakeholders discuss issues in order to come to an agreement. To this end, they draw on the theories of argumentation and communicative rationality to come up with a rational framework for comparing design ideals. Since these terms may be unfamiliar, I’ll spend a couple of paragraphs describing them briefly.

Argumentation is essentially reasoned debate – i.e. the process of reaching conclusions via arguments that use informal logic – which, according to the definition in the foregoing link, is the attempt to develop a logic to assess, analyse and improve ordinary language (or “everyday”) reasoning. Hirschheim and Klein use the argumentation framework proposed by Stephen Toulmin to illustrate their approach.

The basic premise of communicative rationality is that rationality (or reason) is tied to social interactions and dialogue. In other words, the exercise of reason can occur only through dialogue. Such communication, or mutual deliberation, ought to result in a general agreement about the issues under discussion. Only once such agreement is achieved can there be a consensus on actions that need to be taken. See my article on rational dialogue in project environments for more on communicative rationality.

Obstacles to rational dialogue and how to overcome them

The key point about communicative rationality is that it assumes the following conditions hold:

- Inclusion: all stakeholders must be included in the discussion.

- Autonomy: all participants should be able to present and criticise views without fear of reprisals.

- Empathy: participants must be willing to listen to and understand claims made by others.

- Power neutrality: power differences (levels of authority) between participants should not affect the discussion.

- Transparency: participants must not indulge in strategic actions (i.e. lying!).

Clearly these are idealistic conditions, difficult to achieve in any real organisation. Klein and Hirschheim acknowledge this point, and note the following barriers to rationality in organisational decision making:

- Social barriers: These include inequalities (between individuals) in power, status, education and resources.

- Motivational barriers: This refers to the psychological cost of prolonged debate. After a period of sustained debate, people will often cave in just to stop arguing even though they may have the better argument.

- Economic barriers: Time is money: most organisations cannot afford a prolonged debate on contentious issues.

- Personality differences: How often is it that the most charismatic or articulate person gets their way, and the quiet guy in the corner (with a good idea or two) is completely overlooked?

- Linguistic barriers: This refers to the difficulty of formulating arguments in a way that makes sense to the listener. This involves, among other things, the ability to present ideas succinctly without glossing over the important issues – a skill not possessed by many.

These barriers will come as no surprise to most readers. It will be just as unsurprising that it is difficult to overcome them. Klein and Hirschheim offer the usual solutions including:

- Encourage open debate – They suggest the use of technologies that support collaboration. They can be forgiven for their optimism given that the paper was written a decade ago, but the fact of the matter is that all the technologies that have sprouted since have done little to encourage open debate.

- Implement group decision techniques – these include arrangements such as quality circles, nominal groups and constructive controversy. However, these too will not work unless people feel safe enough to articulate their opinions freely.

Even though the barriers to open dialogue are daunting, it behooves system designers to strive towards reducing or removing them. There are effective ways to do this, but that’s a topic I won’t go into here as it has been dealt with at length elsewhere.

Principles for arguments about value judgements

So, assuming the environment is right, how should we debate value judgements? Klein and Hirschheim recommend using informal logic supplemented with ethical principles. Let’s look at these two elements briefly.

Informal logic is a means to reason about human concerns. Typically, in these issues there is no clear cut notion of truth and falsity. Toulmin’s argumentation framework (mentioned earlier in this post) tells us how arguments about such issues should be constructed. It consists of the following elements:

- Claim: A statement that one party asks another to accept as true. An example would be my claim that I did not show up to work yesterday because I was not well.

- Data (Evidence): The basis on which one party expects the other to accept a claim as true. To back the claim made in the previous line, I might draw attention to my runny nose and hoarse voice.

- Warrant: The bridge between the data and the claim. Again, using the same example, a warrant would be that I look drawn today, so it is likely that I really was sick yesterday.

- Backing: Further evidence, if the warrant should prove insufficient. If my boss is unconvinced by my appearance, he may insist on a doctor’s certificate.

- Qualifier: These are words that express a degree of certainty about the claim. For instance, to emphasise just how sick I was, I might tell my boss that I stayed in bed all day because I had high fever.

This is all quite theoretical: when we debate issues we do not stop to think whether a statement is a warrant or a backing or something else; we just get on with the argument. Nevertheless, knowledge of informal logic can help us construct better arguments for our positions. Further, at the practical level there are computer supported deliberative techniques such as argument mapping and dialogue mapping which can assist in structuring and capturing such arguments.
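To make the structure concrete, here is a minimal sketch of how the elements listed above might be recorded in code. It is an illustration only: the class and field names are my own assumptions, not something taken from Toulmin or from the paper, and real argument mapping tools will use their own representations.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ToulminArgument:
    """One argument split into Toulmin's elements (names are illustrative)."""
    claim: str                       # the statement to be accepted
    data: str                        # evidence offered for the claim
    warrant: str                     # bridge between the data and the claim
    backing: Optional[str] = None    # further evidence, if the warrant is doubted
    qualifier: Optional[str] = None  # words expressing the degree of certainty

    def missing_elements(self) -> list[str]:
        """Return the names of the optional elements not yet supplied."""
        return [name for name in ("backing", "qualifier")
                if getattr(self, name) is None]


# The sick-day example from the list above.
sick_day = ToulminArgument(
    claim="I did not show up to work yesterday because I was not well.",
    data="I have a runny nose and a hoarse voice.",
    warrant="I look drawn today, so it is likely that I really was sick yesterday.",
)

print(sick_day.missing_elements())

# The boss is unconvinced, so backing is added.
sick_day.backing = "A doctor's certificate."
print(sick_day.missing_elements())
```

Writing an argument down this way does little more than force one to ask which elements are still missing, but that is often enough to expose a weak case.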

The other element is ethics: Klein and Hirschheim contend that moral and ethical principles ought to be considered when value judgements are being evaluated. These principles include:

- Ought implies can – which essentially means that one morally ought to do something only if one can do it (see this paper for an interesting counterview of this principle). Taking the contrapositive of this statement, one gets “Cannot implies ought not” which means that a design can be criticised if it involves doing something that is (demonstrably) impossible – or makes impossible demands on certain parties.

- Conflicting principles – This is best explained via an example. Consider a system that saves an organisation money but involves putting a large number of people out of work. In this case we would have an economic principle coming up against a social one. According to the principle, a design ideal based on an economic imperative can be criticised on social grounds.

- The principle of reconsideration – This implies reconsidering decisions if relevant new information becomes available. For example, if it is found that a particular design overlooked a certain group of users, then the design should be reconsidered in the light of their needs.

They also mention that new ethical and moral theories may trigger the principle of reconsideration. In my opinion, however, this is a relatively rare occurrence: how often are new ethical or moral theories proposed?

Summarising

The main point made by the authors is that system design involves value judgements. Since these are largely subjective, open debate using the principles of informal logic is the best way to deal with conflicting values. Moreover, since such issues are not entirely technical, one has to use ethical principles to guide debate. These principles – not asking people to do the impossible, taking everyone’s interests into account, and reconsidering decisions in the light of new information – are reasonable if not self-evident. However, as obvious as they are, they are often ignored in design deliberations. Hirschheim and Klein do us a great service by reminding us of their importance.

Capturing decision rationale on projects

Introduction

Most knowledge management efforts on projects focus on capturing what or how rather than why. That is, they focus on documenting approaches and procedures rather than the reasons behind them. This often leads to a situation where folks working on subsequent projects (or even the same project!) are left wondering why a particular technique or approach was favoured over others. How often have you as a project manager asked yourself questions like the following when browsing through a project archive?

- Why did project decision-makers choose to co-develop the system rather than build it in-house or outsource it?

- Why did the developer responsible for a module use this approach rather than that one?

More often than not, project archives are silent on such matters because the reasoning behind decisions isn’t documented. In this post I discuss how the Issue Based Information System (IBIS) notation can be used to fill this “rationale gap” by capturing the reasoning behind project decisions.

Note: Those unfamiliar with IBIS may want to browse my post entitled The what and whence of issue-based information systems for a quick introduction to the notation and its uses. I also recommend downloading and installing Compendium, a free software tool that can be used to create IBIS maps.

Example 1: Build or outsource?

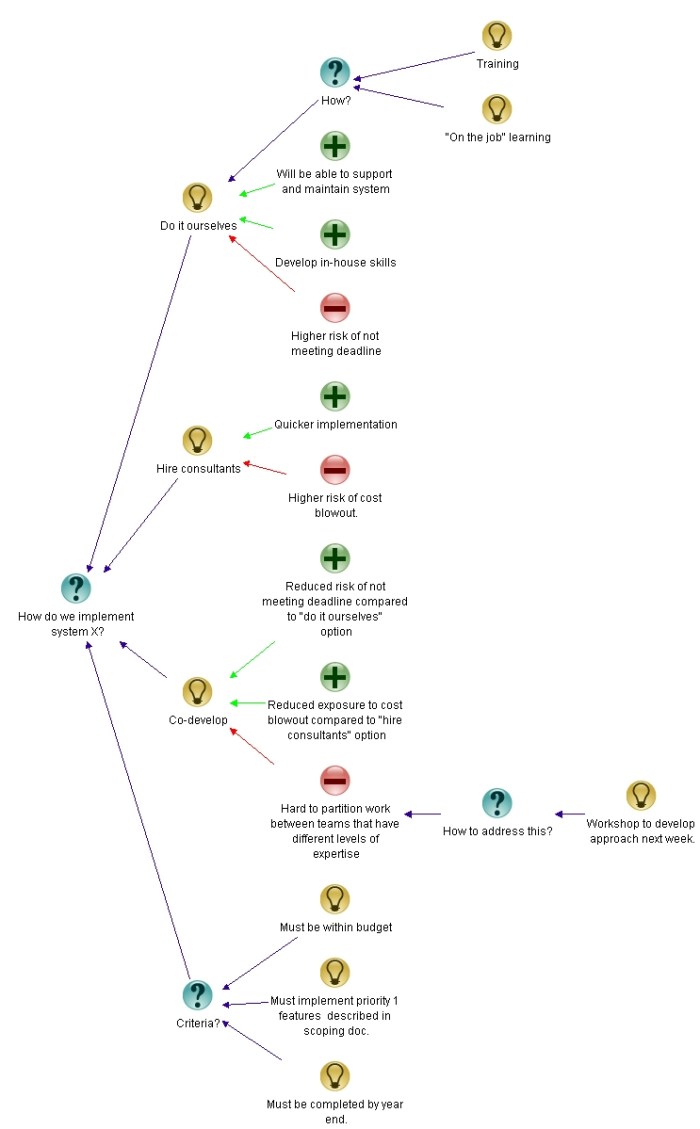

In a post entitled The Approach: A dialogue mapping story, I presented a fictitious account of how a project team member constructed an IBIS map of a project discussion (Note: dialogue mapping refers to the art of mapping conversations as they occur). The issue under discussion was the approach that should be used to build a system.

The options discussed by the team were:

- Build the system in-house.

- Outsource system development.

- Co-develop using a mix of external and internal staff.

Additionally, the selected approach had to satisfy the following criteria:

- Must be within a specified budget.

- Must implement all features that have been marked as top priority.

- Must be completed within a specified time.

The post details how the discussion was mapped in real-time. Here I’ll simply show the final map of the discussion (see Figure 1).

Although the option chosen by the group is not marked (they chose to co-develop), the figure describes the pros and cons of each approach (and elaborations of these) in a clear and easy-to-understand manner. In other words, it maps the rationale behind the decision – a person looking at the map can get a sense for why the team chose to co-develop rather than use any of the other approaches.

Example 2: Real-time updates of a data mart

In another post on dialogue mapping I described how IBIS was used to map a technical discussion about the best way to update selected tables in a data mart during business hours. For readers who are unfamiliar with the term: data marts are databases that are (generally) used purely for reporting and analysis. They are typically updated via batch processes that are run outside of normal business hours. The requirement to do real-time updates arose from a business need to see up-to-the-minute reports at specified times during the financial year.

Again, I’ll refer the reader to the post for details, and simply present the final map (see Figure 2).

Since there are a few technical terms involved, here’s a brief rundown of the options, lifted straight from my earlier post (Note: feel free to skip this detail – it is incidental to the main point of this post):

- Use our messaging infrastructure to carry out the update.

- Write database triggers on transaction tables. These triggers would update the data mart tables directly or indirectly.

- Write custom T-SQL procedures (or an SSIS package) to carry out the update (the database is SQL Server 2005).

- Run the relevant (already existing) Extract, Transform, Load (ETL) procedures at more frequent intervals – possibly several times during the day.

In this map the option chosen by the group is marked – it was decided that no additional development was needed; the “real-time” requirement could be satisfied simply by running existing update procedures during business hours (option 4 listed above).

Once again, the reasoning behind the decision is easy to see: the option chosen offered the simplest and quickest way to satisfy the business requirement, even though the update was not really done in real-time.

Summarising

The above examples illustrate how IBIS captures the reasoning behind project decisions. It does so by:

- Making explicit all the options considered.

- Describing the pros and cons of each option (and elaborations thereof).

- Providing a means to explicitly tag an option as a decision.

- Optionally, providing a means to link out to external sources (documents, spreadsheets, URLs). In the second example I could have added clickable references to documents elaborating on technical detail using the external link capability of Compendium.
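For readers who think in code, the four capabilities listed above can be sketched as a small data structure. This is a rough illustration under my own assumptions: the names `Issue`, `Idea`, `decide` and `rationale` are hypothetical and this is not how Compendium stores its maps. The options come from Example 2; the pro and con attached to the chosen option are the ones stated in the text, and the other options are left without arguments rather than invented.

```python
from dataclasses import dataclass, field


@dataclass
class Idea:
    """A candidate answer (option) to an issue."""
    text: str
    pros: list[str] = field(default_factory=list)
    cons: list[str] = field(default_factory=list)
    links: list[str] = field(default_factory=list)  # external documents, URLs
    is_decision: bool = False


@dataclass
class Issue:
    """The question under discussion, with every option considered."""
    question: str
    ideas: list[Idea] = field(default_factory=list)

    def decide(self, text: str) -> None:
        """Tag exactly one idea as the decision; raise if none matches."""
        if not any(idea.text == text for idea in self.ideas):
            raise ValueError(f"no such option: {text!r}")
        for idea in self.ideas:
            idea.is_decision = idea.text == text

    def rationale(self) -> str:
        """Render the map as indented text, marking the chosen option."""
        lines = [f"? {self.question}"]
        for idea in self.ideas:
            marker = " [DECISION]" if idea.is_decision else ""
            lines.append(f"  * {idea.text}{marker}")
            lines.extend(f"      + {pro}" for pro in idea.pros)
            lines.extend(f"      - {con}" for con in idea.cons)
        return "\n".join(lines)


issue = Issue(
    question="How should selected data mart tables be updated during business hours?",
    ideas=[
        Idea("Use the messaging infrastructure"),
        Idea("Write database triggers on transaction tables"),
        Idea("Write custom T-SQL procedures or an SSIS package"),
        Idea(
            "Run existing ETL procedures more frequently",
            pros=["Simplest and quickest way to satisfy the business requirement"],
            cons=["The update is not really done in real time"],
        ),
    ],
)
issue.decide("Run existing ETL procedures more frequently")
print(issue.rationale())
```

Even in this stripped-down form, the printed outline answers the "why" question: anyone reading it later can see which options were on the table and what tipped the balance.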

Issue maps (as IBIS maps are sometimes called) lay out the reasoning behind decisions in a visual, easy-to-understand way. The visual aspect is important – see this post for more on why visual representations of reasoning are more effective than prose.

I’ve used IBIS to map discussions ranging from project approaches to mathematical model building, and have found the resulting maps invaluable when asked why things were done in a certain way. Just last week, I was able to answer a question about variables used in a market segmentation model that I built almost two years ago – simply by referring back to the issue map of the discussion and the notes I had made in it.

In summary: IBIS provides a means to capture decision rationale in a visual and easy-to-understand way, something that is hard to do using other means.