The influence of related disciplines on project management practice

Introduction

Project management is a relatively new discipline, one that has been formalized only in the last half century or so. Consequently, both academics and practitioners routinely draw upon knowledge in allied (or related) disciplines in order to advance the theory and practice of project management. Given this, it is of interest to ask: what is the (current and future) influence of other, related disciplines on the profession of project management? A paper by Young Kwak and Frank Anbari entitled Availability-Impact Analysis of Project Management Trends: Perspectives From Allied Disciplines looks into this question. This post is a summary and review of the paper.

Some terminology and assumptions first. An allied discipline, in the context of this paper, is any discipline that is related to project management – examples include Human Resource Management and Information Technology. Availability is the volume of ideas relating to project management in an allied discipline, and impact refers to the influence of that research on project management practice. Note that availability and impact are treated as independent variables in the study.

Objectives, methodology and approach

The questions that Kwak and Anbari seek to answer are:

- What trends in allied disciplines could have a significant effect on project management theory and practice?

- How would these trends change (the theory and practice of) project management?

- How would project managers have to change their mind-set because of the impact of these disciplines?

- What actions can be taken to meet the challenges posed by trends in allied disciplines?

Note that I have paraphrased their questions for clarity.

To answer these questions, Kwak and Anbari surveyed a selected group of project managers and project management researchers, seeking their input on a range of issues relating to the above questions. The surveys also solicited qualitative information through respondents’ opinions on the impact, trends and future of project management. The italics in the previous sentence are intended to highlight that the conclusions are based on subjective data gathered from a relatively homogeneous sample – more on this later in the review.

Based on the survey data, the authors:

- Derived and plotted availability-impact relationships for each of the allied disciplines in a 2×2 matrix (in which each of the two variables took on the values ‘High’ and ‘Low’)

- Identified how trends in these disciplines influence project management.

- Conducted a structured survey to solicit opinions on how the project management community can respond to (or take advantage of) these influences.

By reviewing project management research literature, the authors identified the following eight allied areas as being potentially relevant to the future of the discipline:

- Operations Research/Decision Sciences/Operations Management/Supply-Chain Management (abbreviated as OR/DS/OM/SCM)

- Organizational Behavior/Human Resource Management (abbreviated as OB/HR)

- Information Technology/Information Systems (IT/IS)

- Technology Applications/Innovation/New Product Development/Research and Development (TECH/INNOV/NPD/R&D)

- Engineering and Construction/Contracts/Legal Aspects/Expert Witness (EC/CONTRACT/LEGAL)

- Strategy/Integration/Portfolio Management/Value of Project Management/Marketing (STRATEGY/PPM)

- Performance Management/Earned Value Management/Project Finance and Accounting (PERFORM/EVM)

- Quality Management/Six Sigma/Process Improvement (QM/6SIGMA/PI)

I’m not an academic, and don’t claim to be current with research literature, but I think that psychology and economics ought to have made it to this list.

In the survey questionnaire, the authors asked respondents to rate the above disciplines on a 7-point scale against the following criteria:

- The availability of project management-related information/knowledge/research in the discipline.

- The impact of the discipline on project management.

The rating was done on an ordinal scale of 1 to 7. Respondents were also asked open ended questions regarding trends in allied disciplines and how the project management community should adapt to or take advantage of these trends.
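To make the mapping from ratings to the 2×2 matrix concrete, here is a minimal sketch of how a pair of mean ratings could be placed in a quadrant. The cut-off of 4 (the midpoint of the 1 to 7 scale), the function name `quadrant` and the scores themselves are assumptions made for illustration; the post does not say how the authors drew the line between ‘High’ and ‘Low’, and the numbers below are made up rather than taken from the paper.

```python
def quadrant(availability, impact, cutoff=4.0):
    """Place a discipline in the 2x2 availability-impact matrix.

    Scores are mean ratings on the 1-7 scale. `cutoff` is an assumption
    (the scale midpoint) separating 'Low' from 'High'.
    """
    vertical = "upper" if impact > cutoff else "lower"          # impact on the vertical axis
    horizontal = "right" if availability > cutoff else "left"   # availability on the horizontal axis
    return f"{vertical} {horizontal}"


# Made-up mean ratings (availability, impact); NOT figures from the paper.
hypothetical_scores = {
    "Discipline A": (5.6, 5.9),
    "Discipline B": (5.1, 3.2),
    "Discipline C": (3.0, 2.8),
    "Discipline D": (2.9, 5.2),
}

placements = {name: quadrant(a, i) for name, (a, i) in hypothetical_scores.items()}
for name, place in placements.items():
    print(f"{name}: {place} quadrant")
```

A different cut-off (the median rating across disciplines, say) would move disciplines near the boundary from one quadrant to another, which is one reason to read the quadrant placements with some caution.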

The authors describe the demographics of the survey population – I won’t go into details of this; please see the paper for details.

Results and Discussion

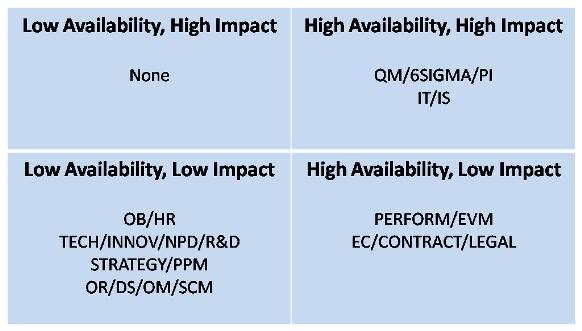

The current availability and impact of allied disciplines on project management – as perceived by the surveyed practitioners and academics – is summarized in Figure 1 and the predicted future availability-impact relationships are shown in Figure 2. I’ll discuss the current situation first.

Current Situation

The current situation is as shown below:

Figure 1: Current availability-impact of allied disciplines

According to the survey data, disciplines in the lower left quadrant are lacking in novel project management-related information and thus have potential for more research. They also do not have much of an impact on the field. In my opinion, even though there may be a lack of research directly related to project management in these areas, there are plenty of papers whose findings can be adapted to project management – see this post for an example drawn from a recent paper on strategy execution. My point: even research that isn’t directly related to project management can be relevant to the field.

Disciplines in the lower right quadrant have plenty of research related to project management, but most of this work tends to have a low impact on the field. This seems reasonable – there’s a stack of research dealing with project performance and engineering/construction projects (this observation is based on a quick survey of papers that have appeared in the Project Management Journal over the last two years). Most of this research tends to have little effect on the field – for example, there haven’t been many radically new practices in the area of performance and construction management.

Disciplines in the upper left quadrant lack research but could potentially have a great impact on project management practice. To me this quadrant presents interesting possibilities because it refers to areas which currently have no (or very little) project management-related research but which could, nevertheless, have a high impact on practice. As described in my discussion of the low-low quadrant, a lot of research in other, unrelated fields can be adapted to project management. Based on my readings, I believe behavioural science/psychology (focusing on the individual rather than the group) and economics fall into this category – see this post for an example drawn from psychology and this one for one drawn from economics. Unfortunately, these fields are not considered by the authors.

This brings us to the upper right quadrant, which includes quality/process management and information technology. There’s little doubt that in recent years there’s been a deluge of project-related research papers published in these areas. It is also clear that these areas have had a high impact on project management practice. However, in my opinion, it is far from clear that the effect of this research has been positive; if anything, it has led to an unhealthy obsession with process- and technology-based approaches to project management.

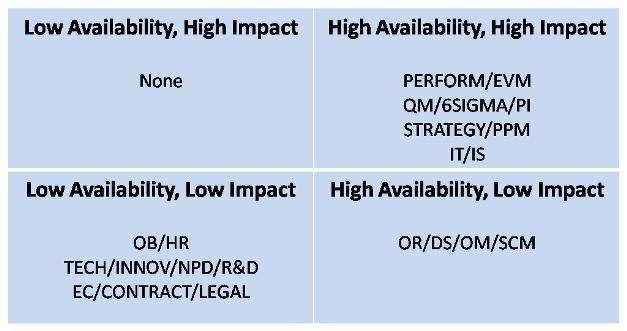

Future situation

Future trends, according to those surveyed, are as depicted in Figure 2.

Figure 2: Future availability-impact of allied disciplines

Let’s look at the disciplines that have moved:

PERFORM/EVM and STRATEGY/PPM have moved up to the high-high quadrant, reflecting their (perceived) future importance. However, is this really the case or is it a case of availability bias? The latter is plausible, given the recent flood of papers, articles and talks on topics relating to STRATEGY/PPM in journals and conferences. Practitioners and academics exposed to this constant barrage of information (propaganda?) on the topic cannot help but think that it must be a field of great relevance. The anticipated increase in importance of PERFORM/EVM, on the other hand, reflects the belief that project management will become more “metricised” or measurement-oriented. This is no bad thing, provided the metrics are meaningful. In this connection, it is worth looking at Douglas Hubbard’s book on the measurement of intangibles.

OR/DS/OM/SCM has moved from the lower left to the lower right quadrant, reflecting the respondents’ perceptions that there will be more project management-related research in these areas, but that this research will continue to be of limited relevance to the profession. On the surface, this seems quite plausible – as one of the respondents put it, “The impact of decision sciences on project management was high until the 1960s. Project management had its genesis in Operations Research. However, since the 1970s the relative importance, knowledge and research in this area has been decreasing [in comparison to other fields]…” However, I’m not entirely convinced: a case can be made that radical advances in decision sciences may cause a reversal of this trend. The portents are already there – see Hubbard’s work on applied information economics, for example.

EC/CONTRACT/LEGAL has moved from the lower right to the lower left quadrant. I think this is quite possibly correct. Why? Well, because project management, ever since its inception, has been borrowing and adapting much from these areas. It is therefore only natural to expect that this will plateau out (if it hasn’t already) and decrease as time goes on.

A note on relative availability and impact of IT/IS

From an analysis of the raw rankings of the disciplines, the authors infer that IT/IS has, and will continue to have, an availability and impact that is much greater than any other discipline. Presumably this is a consequence of IT/IS being ranked much higher on the 7 point scale than any of the other disciplines. I can’t help but wonder if this is due to a bias in the surveyed population: if one interviews IT project managers or academics specializing in IT, it should be no surprise if they rate the accessibility and importance of technology as being much higher than that of other disciplines. Unfortunately Kwak and Anbari do not give a discipline-wise breakdown of the survey respondents, so I’m unable to judge if this is so.

Opinions of selected respondents

The authors also present detailed opinions of selected respondents. On reading these I found nothing strikingly new. Two academics pleaded for project management to be treated with more respect by other academics – i.e. be “recognized in the management faculty and accorded an equal status to with other traditional management science disciplines.” That academics are concerned about the status of the profession is only natural; whether this “equal status” is desirable is another matter altogether. Another researcher waxed eloquent on the effects of globalization and technology – trends that I think are evident to most practitioners.

The practitioners, on the other hand, focused on currently fashionable areas of practice: quality management/process improvement and portfolio management. There was also a mention of how a “project-based world” was needed in order to respond to “increasing complexity.” The problem is that these terms mean different things to different people; consequently, they don’t mean much at all (see this post for more on the confusion regarding the term “complexity” in the context of projects).

Conclusion

The authors end with some general statements that they claim to have derived from the survey data. These can be summarized as follows:

1. OB/HR is becoming increasingly important as much of project work is about managing internal and external relationships. There is a growing recognition (finally!) that projects are more about people than processes.

2. There will be an increasing dependence on software tools to manage projects (IT/IS).

3. There will be an increasing focus on measuring performance and compliance with regulations and standards (PERFORM/EVM).

4. Portfolio management and quality/process improvement (STRATEGY/PPM and QM/6SIGMA/PI) will continue to get a lot of attention in industry.

5. New tools and techniques will emerge from the intersection between traditional management disciplines (OR/DS/OM/SCM and PERFORM/EVM) and newer ones (IT/IS and TECH/INNOV/NPD/R&D).

It isn’t entirely clear on what basis the authors make the above statements: are they based on 1) survey responses, 2) research literature or 3) the authors’ opinions? And, if it is the first: can one make the above broad generalisations based on small surveys involving fewer than 100 respondents? Perhaps not, I think.

Finally, the authors end with this plea:

“The project management profession is continuously evolving, so the project management community should be receptive to new ideas and also be sensitive to the yearning (!?) of the public and professional community so as to model project management practices to meet their expectations.”

This is true: the project management “community” remains fixated on classical practices and techniques, many of which have questionable value. A degree of openness to new ideas and practices wouldn’t be amiss.

The paper attempts to gauge the current and future influence of allied fields on the research and practice of project management. It does so by surveying a sample of project management academics and professionals and making inferences based on the collected data. The sample is drawn from a population that is steeped in current practice and theory. As a result the respondents may not be aware of the possibilities offered by fields that are currently not on the “project management radar.” This might explain why the upper left quadrant is empty in both matrices. To get around this the authors could have solicited the opinions of practitioners/theorists from allied disciplines.

To summarise: The authors infer some interesting trends from their data, but there remain some questions about the robustness of the inferences and the generalisations made from them.

Visualising content and context using issue maps – an example based on a discussion of Cox’s risk matrix theorem

Introduction

Some time ago I wrote a post on a paper by Tony Cox which describes some flaws in risk matrices (as they are commonly used) and proposes an axiomatic approach to address some of the problems. In a recent comment on that post, Tony Waisanen suggested that someone take up the challenge to map the content of the post and the ensuing discussion using issue mapping. Hence my motivation to write the present post.

My main aims in this post are to:

- Create an issue map visualising the content of my post on Cox’s paper.

- Incorporate points raised in the comments into the map, and show how they relate to Cox’s arguments.

A quick word about the notation and software before proceeding. I’ll use the IBIS (Issue-Based Information System) notation to map the argument. Those unfamiliar with IBIS will find a quick introduction here. The mapping is done using Compendium, an open source issue mapping tool (that can do other things too). I’ll provide a commentary as I build the map, because the detail behind the map cannot be seen in the screenshots.

First map: the flaws in risk matrices and how to fix them

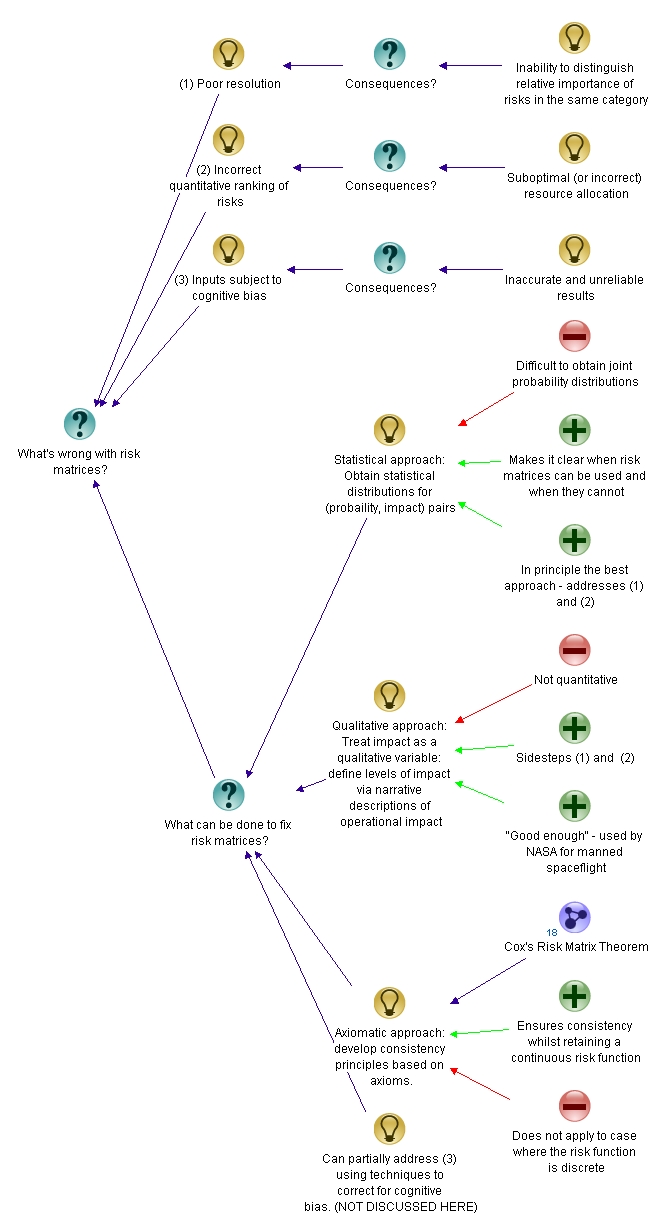

Cox asks the question: “What’s wrong with risk matrices?” – this is, in fact, the title of the paper in which he describes his theorem. The question is therefore an excellent starting point for our map.

As an answer to the question, Cox lists the following points as problems/flaws in risk matrices:

- Poor resolution: risk matrices use qualitative categories (typically denoted by colour – red, green, yellow). Risks within a category cannot be distinguished.

- Incorrect ranking of risks: In some cases, risks can end up in the wrong qualitative category – i.e. a quantitatively higher risk can be mistakenly categorised as a low risk and vice versa. In the worst case, this can lead to suboptimal resource allocation – i.e. a lower risk being given a higher priority.

- Subjective inputs: Often, the criteria used to rank risks are based on subjective inputs. Such subjective inputs are prone to cognitive bias. This leads to inaccurate and unreliable risk rankings.

See my posts on the limitations of scoring methods in risk analysis and on cognitive biases as project meta-risks for more on the above points.
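The first two flaws are easy to reproduce with a toy matrix. The sketch below is only an illustration: the equal-width bands, the colouring in `COLOURS`, the use of probability × impact as the quantitative risk, and the two example risks are all assumptions chosen for the demonstration, not values from Cox’s paper.

```python
import bisect

# Hypothetical 3x3 matrix on probability and impact, both scaled to [0, 1].
CUTS = [1 / 3, 2 / 3]  # assumed equal-width bands: low, medium, high

# Assumed colouring, for illustration only.
# Rows are indexed by impact band, columns by probability band (0 = low).
COLOURS = [
    ["green", "green", "green"],    # low impact
    ["green", "yellow", "yellow"],  # medium impact
    ["green", "yellow", "red"],     # high impact
]


def band(value):
    """Index of the band (0 = low, 1 = medium, 2 = high) that value falls in."""
    return bisect.bisect_right(CUTS, value)


def colour(probability, impact):
    """Qualitative category assigned by the matrix."""
    return COLOURS[band(impact)][band(probability)]


def quantitative_risk(probability, impact):
    """Quantitative risk, taken here to be probability x impact."""
    return probability * impact


risk_a = (0.34, 0.34)  # just inside the medium band on both axes
risk_b = (0.99, 0.32)  # almost certain, impact just inside the low band

for label, (p, i) in (("A", risk_a), ("B", risk_b)):
    print(label, colour(p, i), round(quantitative_risk(p, i), 3))
```

Under these assumptions risk B has the larger product of probability and impact, yet it lands in a green cell while risk A lands in a yellow one. Any two risks that fall in the same cell receive the same colour however different their products are, which is the resolution problem.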

The map with the root question, problems (ideas or responses, in IBIS terminology) and their consequences is shown in Figure 1. Note that I’ve put numbers (1), (2) etc. against the points so that I can refer to them by number in other nodes.

The next question suggests itself: we’ve asked “What’s wrong with risk matrices?” so an obvious follow-up question is, “What can be done to fix risk matrices?” There are a few approaches available to address the problems. These are discussed in my post and the discussion following it. The approaches can be summarised as follows:

- Statistical approach: This involves obtaining the correct statistical distributions for the probability of the risk occurring and the impact of the risk. This is generally hard to do because of the lack of data. However, once this is done, it obviates the need for risk matrices. Furthermore, it warns us about situations in which risk matrices may mislead. In Cox’s words, “One (approach) is to consider applications in which there are sufficient data to draw some inferences about the statistical distribution of (Probability, Consequence) pairs. If data are sufficiently plentiful, then statistical and artificial intelligence tools … can potentially be applied to help design risk matrices that give efficient or optimal (according to various criteria) discrete approximations to the quantitative distribution of risks. In such data-rich settings, it might be possible to use risk matrices when they are useful (e.g., if probability and consequence are strongly positively correlated) and to avoid them when they are not (e.g., if probability and consequence are strongly negatively correlated).” This is, in principle, the best approach.

- Qualitative approach: This approach was discussed by Glen Alleman in this comment. It essentially involves characterising impact using qualitative information – i.e. narrative descriptions of impact. To quote from Glen’s comment, “...the numeric value of impacts are replaced by narrative descriptions of the actual operational impacts from the occurrence of the risk. These narratives are developed through analysis of the system…the quantitative risk as a product is abandoned in place of a classification of response to a predefined consequence.” This approach sidesteps a couple of the issues with risk matrices. Further, many risk-aware organisations have used this method with great success (Glen mentions that NASA and the Department of Defense use such an approach to analyse risks on spaceflight/aviation projects).

- Axiomatic approach: This is the approach that Tony Cox discusses in his paper. It has the advantage of being simple – it assumes that the risk function (defined as probability x impact, for example) is continuous whilst also ensuring consistency to the extent possible (i.e. ensuring a correct quantitative ranking of risks). The downside, as Glen emphasises in his comments, is that risk functions are actually discrete, as discussed in (1) above. Cox’s arguments hinge on the continuity of the risk function, so they do not apply to the discrete case.

The map with these approaches added in is depicted in Figure 2. Note that I’ve added Cox’s theorem in as a map node, indicating that a detailed discussion of the theorem is presented in a separate map.

Note also, that I have added an idea node representing how the issue regarding subjective inputs can be addressed. I will not pursue this point further in the present post as it did not come up in the discussion. That said, I have discussed this point in some detail in an article on cognitive bias in project risk management.

Second map: Cox’s risk matrix theorem

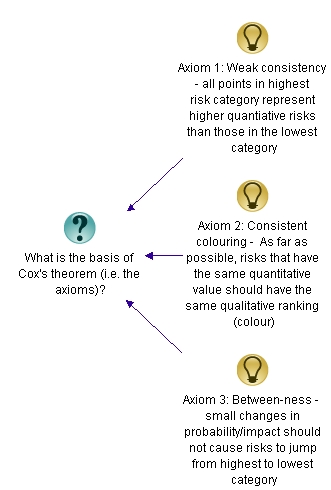

Since the entire discussion is based on Cox’s arguments, it is worth looking into his paper in some detail – in particular, at the axioms and the theorem itself. It is convenient to hive this material off into a separate map, but one connected to the original map (see the map node representing the theorem in Figure 2 above).

The root question of the new map would be, “What is the basis of Cox’s theorem?” Answer: the theorem is based on the axioms and other (tacit) assumptions.

Now, my earlier post on Cox’s theorem contains a very detailed treatment of the axioms, so I’ll offer only a one-line explanation for each here. The axioms are:

- Weak consistency – which states that all risks in the highest category (red) must represent quantitatively higher risks than those in the lowest category (green).

- Consistent colouring – As far as possible, risks with the same quantitative value must have the same colour.

- Between-ness – small changes in probability or impact (i.e. the risk function) should not cause a risk to move from the highest (red) category to the lowest (green) one, or vice versa.

The axioms are intuitively appealing – they express a basic consistency that one would expect risk matrices to satisfy. The secondary map, with the three axioms shown, is depicted in Figure 3.

Cox’s theorem, which essentially follows from these axioms, can be stated as follows: In a risk matrix that satisfies the three axioms, all cells in the bottom row and left-most column must be green and all cells in the second from bottom row and second from left column must be non-red.

The theorem has two corollaries:

- 2×2 matrices cannot satisfy the theorem.

- 3×3 and 4×4 matrices which satisfy the theorem have a unique colouring scheme.

These are rather surprising conclusions, arrived at from some very intuitive axioms. The secondary map, with the theorem and corollaries added in, is shown in Figure 4.
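The colour pattern stated in the theorem is simple enough to check mechanically. The following is a minimal sketch of such a check; the function name and the example colourings are hypothetical, and the code tests only the pattern as worded in this post, not the axioms themselves.

```python
def satisfies_theorem_conclusion(matrix):
    """Check the colour pattern stated in the theorem.

    `matrix` is a list of rows, top row first; each row lists cell colours
    from the left-most column to the right-most one.
    """
    bottom_row = matrix[-1]
    left_column = [row[0] for row in matrix]
    second_row = matrix[-2]                      # second from bottom
    second_column = [row[1] for row in matrix]   # second from left

    all_green = all(c == "green" for c in bottom_row + left_column)
    none_red = all(c != "red" for c in second_row + second_column)
    return all_green and none_red


# Hypothetical colourings, top row first; for illustration only.
candidate = [
    ["green", "yellow", "red"],
    ["green", "yellow", "yellow"],
    ["green", "green", "green"],
]
symmetric_heat_map = [
    ["yellow", "red", "red"],
    ["green", "yellow", "red"],
    ["green", "green", "yellow"],
]
two_by_two = [
    ["green", "red"],
    ["green", "green"],
]

for name, m in (("candidate", candidate),
                ("symmetric heat map", symmetric_heat_map),
                ("2x2", two_by_two)):
    print(name, satisfies_theorem_conclusion(m))
```

In a 2×2 matrix the top-right cell lies in both the second-from-bottom row and the second-from-left column, so the stated pattern leaves no cell that may be red. This is in line with the first corollary.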

That completes the map of the theorem. However, in this comment Glen Alleman pointed out that the assumption of a continuous function to describe risk (such as risk = probability x impact, where both quantities on the right hand side are continuous functions) is questionable. He also makes the point that probability is specified by a distribution, and numerical values that come out of distributions cannot be combined via arithmetic operations. The reason that folks make the simplifying assumptions (of continuity and ignoring the probabilistic nature of the variables) is that it is intuitive and easy to work with. As I mentioned in one of my responses to the comments, one can choose to define risk this way although it isn’t logically sound. Cox’s theorem essentially specifies consistency conditions that need to be satisfied when such ad-hoc approaches are used. The map with this discussion included is shown in Figure 5.

That completes the mapping exercise: Figures 2 and 5 represent a fairly complete map of the post and the discussion around it.

Caveats and conclusions

At the risk of belaboring the obvious, the maps represent my interpretation of Cox’s work and my interpretation of others’ comments on my post on Cox’s work. Further, the discussion on which the maps are based is far from comprehensive because it did not cover other limitations of risk matrices. Please see my post on limitations of scoring methods in risk analysis for a detailed discussion of these.

Before closing, it is worth looking at Figures 2 and 5 from a broader perspective: the figures make clear the context of the discussion in a way that is simply not possible through words. As an example, Figure 2 lays bare the context of Cox’s theorem – it emphasises, for example, that Cox’s approach isn’t the only method to fix what’s wrong with risk matrices. Further, Figure 5 distinguishes between explicitly declared and tacit assumptions. The three axioms are examples of the former; the assumption of continuity is an example of the latter.

In this post I’ve summarised the content and context of Cox’s risk matrix theorem via issue mapping. The maps provide an “at a glance” summary of the theorem along with supporting assumptions and axioms. Further, the maps also incorporate key elements of readers’ reactions to the post. I hope this example clarifies the content and context of my earlier post on Cox’s risk matrix theorem, whilst also serving as a demonstration of the utility of the IBIS notation in mapping complex arguments.

Acknowledgements:

Thanks go out to Tony Waisanen for suggesting that the post and comments be issue mapped, and to Glen Alleman, Robert Higgins and Prakash Vaidhyanathan for their contributions to the discussion.

When more knowledge means more uncertainty – a task correlation paradox and its resolution

Introduction

Project tasks can have a variety of dependencies. The most commonly encountered ones are task scheduling dependencies such as finish-to-start and start-to-start relationships which are available in many scheduling tools. However, other kinds of dependencies are possible too. For example, it can happen that the durations of two tasks are correlated in such a way that if one task takes longer or shorter than average, then so does the other. [Note: In statistics such a relationship between two quantities is called a positive correlation and an inverse relationship is termed a negative correlation]. In the absence of detailed knowledge of the relationship, one can model such duration dependencies through statistical correlation coefficients. In my previous post, I showed – via Monte Carlo simulations – that the uncertainty in the duration of a project increases if project task durations are positively correlated (the increase in uncertainty being relative to the uncorrelated case). At first sight this is counter-intuitive, even paradoxical. Knowing that tasks are correlated essentially amounts to more knowledge about the tasks as compared to the uncorrelated case. More knowledge should equate to less uncertainty, so one would expect the uncertainty to decrease compared to the uncorrelated case. This post discusses the paradox and its resolution using the example presented in the previous post.

I’ll begin with a brief recapitulation of the main points of the previous post and then discuss the paradox in some detail.

The example and the paradox

The “project” that I simulated consisted of two identical, triangularly distributed tasks performed sequentially. The triangular distribution for each of the tasks had the following parameters: minimum, most likely and maximum durations of 2, 4 and 8 days respectively. Simulations were carried out for two cases:

- No correlation between the two tasks.

- A correlation coefficient of 0.79 between the two tasks.

The simulations yielded probability distributions for overall completion times for the two cases. I then calculated the standard deviation for both distributions. The standard deviation is a measure of the “spread” or uncertainty represented by a distribution. The standard deviation for the correlated case turned out to be more than 30% larger than that for the uncorrelated case (2.33 and 1.77 days respectively), indicating that the probability distribution for the correlated case has a much wider spread than that for the uncorrelated case. The difference in spread can be seen quite clearly in figure 5 of my previous post, which depicts the frequency histograms for the two simulations (the frequency histograms are essentially proportional to the probability distribution). Note that the averages for the two cases are 9.34 and 9.32 days – statistically identical, as we might expect, because the tasks are identically distributed.
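For readers who want to try this themselves, here is a minimal Monte Carlo sketch using only the Python standard library. It is not the code behind the previous post. The correlation is induced with a Gaussian copula (correlated normal draws mapped through the normal CDF and then through the inverse triangular CDF), which is an assumption made here because it keeps each task triangularly distributed. The value 0.79 is used as the copula parameter, so the correlation actually realised between the durations will differ slightly from 0.79.

```python
import math
import random
import statistics

LOW, MODE, HIGH = 2.0, 4.0, 8.0   # task parameters from the post (days)
RHO = 0.79                        # used here as the copula parameter (assumption)
N = 50_000                        # number of simulated projects per case
NORMAL = statistics.NormalDist()


def triangular_inverse_cdf(u):
    """Map a uniform number u in [0, 1] to a triangular(LOW, MODE, HIGH) duration."""
    split = (MODE - LOW) / (HIGH - LOW)
    if u < split:
        return LOW + math.sqrt(u * (HIGH - LOW) * (MODE - LOW))
    return HIGH - math.sqrt((1.0 - u) * (HIGH - LOW) * (HIGH - MODE))


def simulate_totals(rho, n, rng):
    """Return n simulated total durations for two tasks done in sequence."""
    totals = []
    for _ in range(n):
        z_a = rng.gauss(0.0, 1.0)
        # z_b is standard normal and has correlation rho with z_a
        z_b = rho * z_a + math.sqrt(1.0 - rho * rho) * rng.gauss(0.0, 1.0)
        task_a = triangular_inverse_cdf(NORMAL.cdf(z_a))
        task_b = triangular_inverse_cdf(NORMAL.cdf(z_b))
        totals.append(task_a + task_b)
    return totals


rng = random.Random(42)
uncorrelated = simulate_totals(0.0, N, rng)
correlated = simulate_totals(RHO, N, rng)

for label, totals in (("uncorrelated", uncorrelated), ("correlated", correlated)):
    print(label, round(statistics.mean(totals), 2), round(statistics.pstdev(totals), 2))
```

The two means should come out close to each other, while the standard deviation for the correlated case should be noticeably larger. The exact figures depend on the seed and on how the correlation is imposed, so they need not match the numbers quoted above to the second decimal place.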

Why is the uncertainty (as measured by the standard deviation of the distribution) greater in the correlated case?

Here’s a brief explanation why. In the uncorrelated case, the outcome of the first task has no bearing on the outcome of the second. So if the first task takes longer than the average time (or more precisely, median time), the second one would have an even chance of finishing before the average time of the distribution. There is, therefore, a good chance in the uncorrelated case that overruns (underruns) in the first task will be cancelled out by underruns (overruns) in the second. This is essentially why the combined distribution for the uncorrelated case is more symmetric than that of the correlated case (see figure 5 of the previous post). In the correlated case, however, if the first task takes longer than the median time, chances are that the second task will take longer than the median too (with a similar argument holding for shorter-than-median times). The second task thus has an effect of amplifying the outcome of the first task. This effect becomes more pronounced as we move towards the extremes of the distribution, thus making extreme outcomes more likely than in the uncorrelated case. This has the effect of broadening the combined probability distribution – and hence the larger standard deviation.
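The broadening can also be checked against the standard formula for the variance of a sum of two random variables. This is a textbook identity rather than something taken from the previous post, and the calculation treats 0.79 as a linear (Pearson) correlation coefficient, which is an assumption: a simulation tool may apply the coefficient to ranks instead. With both tasks having the same standard deviation $\sigma$ and correlation $\rho$:

$$
\sigma_{A+B}^2 = \sigma_A^2 + \sigma_B^2 + 2\rho\,\sigma_A\sigma_B = 2\sigma^2(1+\rho)
$$

For a triangular distribution with minimum $a$, most likely value $m$ and maximum $b$, the variance is

$$
\sigma^2 = \frac{a^2 + m^2 + b^2 - am - ab - mb}{18} = \frac{4 + 16 + 64 - 8 - 16 - 32}{18} = \frac{28}{18} \approx 1.56
$$

so that

$$
\rho = 0:\ \sigma_{A+B} = \sqrt{2 \times 1.556} \approx 1.76, \qquad \rho = 0.79:\ \sigma_{A+B} = \sqrt{2 \times 1.556 \times 1.79} \approx 2.36
$$

Both values are close to the simulated standard deviations of 1.77 and 2.33 days. The positive cross term $2\rho\,\sigma_A\sigma_B$ is the algebraic counterpart of the amplification described above.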

Now, although the above explanation is technically correct, the sense that something’s not quite right remains: how can it be that knowing more about the tasks that make up a project results in increased overall uncertainty?

Resolving the paradox

The key to resolving the paradox lies in looking at the situation after task A has completed but B is yet to start. Let’s look at this in some detail.

Consider the uncorrelated case first. The two tasks are independent, so after A completes, we still know nothing more about the possible duration of B other than that it is triangularly distributed with min, most likely and max times of 2, 4 and 8 days. In the correlated case, however, the duration of B tracks the duration of A – that is, if A takes a long (or short) time then so will B. So, after A has completed, we have a pretty good idea of how long B will take. Our knowledge of the correlation works to reduce the uncertainty in B – but only after A is done.

One can also frame the argument in terms of conditional probability.

In the uncorrelated case, the probability distribution of B – let’s call it P(B) – is independent of A. So the conditional probability of B given that A has already finished (often denoted as P(B|A)) is identical to P(B). That is, there is no change in our knowledge of B after A has completed. Remember that we know P(B) – it is a triangular distribution with min, most likely and max completion times of 2, 4 and 8 days respectively. In the correlated case, however, P(B|A) is not the same as P(B) – the knowledge that A has completed has a huge bearing on the distribution of B. Even if one does not know the conditional distribution of B, one can say with some certainty that outcomes close to the duration of A are very likely, and outcomes substantially different from A are highly unlikely. The degree of “unlikeliness” – and the consequent shape of the distribution – depends on the value of the correlation coefficient.
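The conditional argument can be illustrated numerically by comparing the spread of B over all simulated runs with its spread over only those runs in which A came in within a given window. The sketch below is an illustration under stated assumptions: the correlation is imposed with a Gaussian copula using 0.79 as its parameter, and the window of 6 to 7 days for A is an arbitrary choice representing “A took a long time”.

```python
import math
import random
import statistics

LOW, MODE, HIGH = 2.0, 4.0, 8.0   # min, most likely and max durations (days)
NORMAL = statistics.NormalDist()


def triangular_inverse_cdf(u):
    """Map a uniform number u in [0, 1] to a triangular(LOW, MODE, HIGH) duration."""
    split = (MODE - LOW) / (HIGH - LOW)
    if u < split:
        return LOW + math.sqrt(u * (HIGH - LOW) * (MODE - LOW))
    return HIGH - math.sqrt((1.0 - u) * (HIGH - LOW) * (HIGH - MODE))


def sample_pairs(rho, n, rng):
    """Return n (A, B) duration pairs linked by a Gaussian copula with parameter rho."""
    pairs = []
    for _ in range(n):
        z_a = rng.gauss(0.0, 1.0)
        z_b = rho * z_a + math.sqrt(1.0 - rho * rho) * rng.gauss(0.0, 1.0)
        pairs.append((triangular_inverse_cdf(NORMAL.cdf(z_a)),
                      triangular_inverse_cdf(NORMAL.cdf(z_b))))
    return pairs


def spread_of_b_given_a(pairs, a_min, a_max):
    """Standard deviation of B over the runs in which A fell in [a_min, a_max]."""
    selected = [b for a, b in pairs if a_min <= a <= a_max]
    return statistics.pstdev(selected)


rng = random.Random(7)
independent = sample_pairs(0.0, 60_000, rng)
dependent = sample_pairs(0.79, 60_000, rng)

for label, pairs in (("uncorrelated", independent), ("correlated", dependent)):
    overall = statistics.pstdev([b for _, b in pairs])
    conditional = spread_of_b_given_a(pairs, 6.0, 7.0)
    print(label, "sd of B:", round(overall, 2),
          "| sd of B given A in [6, 7]:", round(conditional, 2))
```

In the uncorrelated case the two spreads should be essentially the same, reflecting the fact that P(B|A) equals P(B). In the correlated case the conditional spread should be clearly smaller than the overall one: once A is known, B is less uncertain.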

Endnote

So we see that, on the one hand, positive correlations between tasks increase uncertainty in the overall duration of the two tasks. This happens because extreme outcomes become more likely when the tasks are correlated. On the other hand, knowledge of the correlation can also reduce uncertainty – but only after one of the correlated tasks is done. There is no paradox here; it’s all a question of where we are on the project timeline.

Of course, one can argue that the paradox is an artefact of the assumption that the two tasks remain triangularly distributed in the correlated case. It is far from obvious that this assumption is correct, and it is hard to validate in the real world. That said, I should add that most commercially available simulation tools treat correlations in much the same way as I have done in my previous post – see this article from the @Risk knowledge base, for example.

In the end, though, even if the paradox is only an artefact of modelling and has no real world application, it is still a good pedagogic example of how probability distributions can combine to give counter-intuitive results.

Acknowledgement:

Thanks to Vlado Bokan for several interesting conversations relating to this paradox.